Finite elements can handle complex geometry, adaptive meshes, and higher-order approximations, and they have a firm theoretical foundation

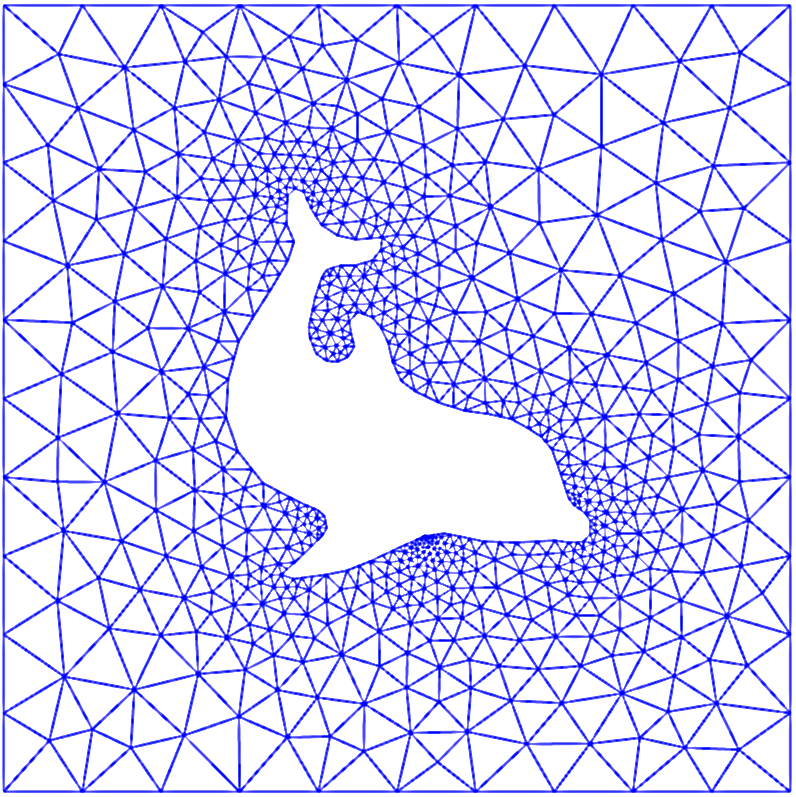

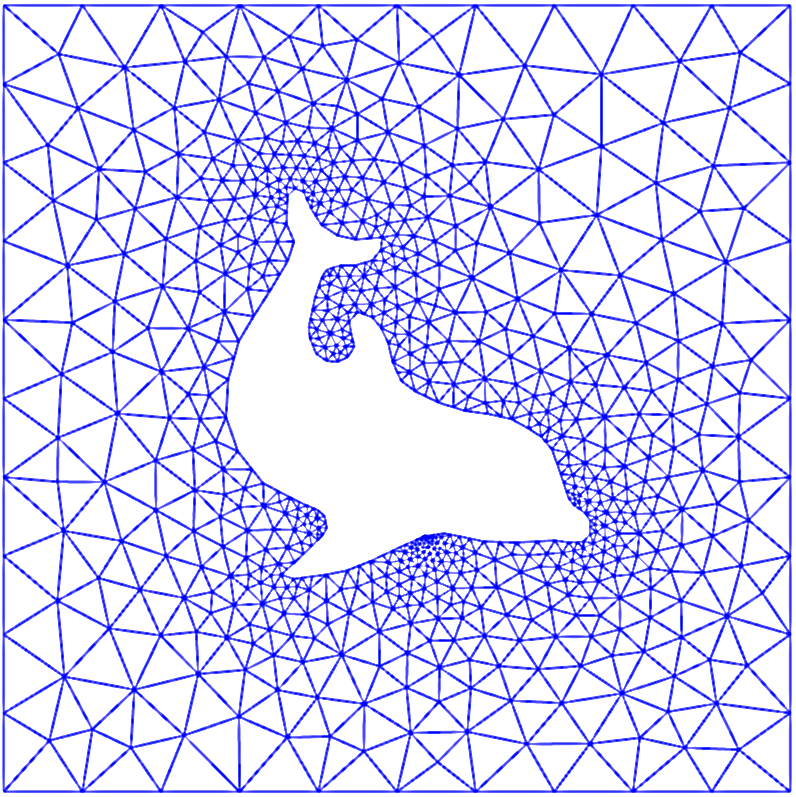

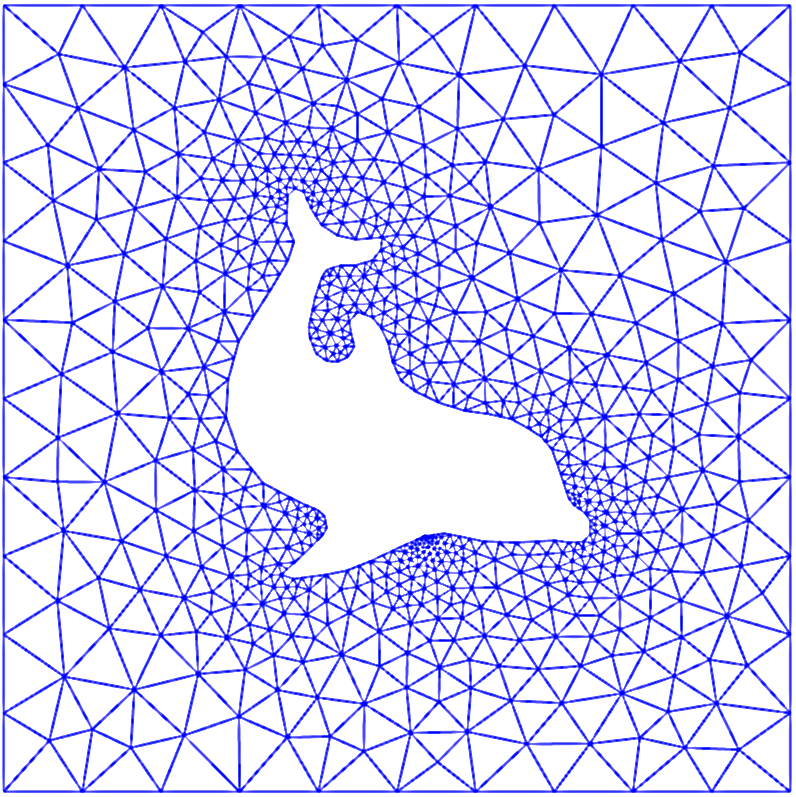

A dolphin and its flow

Solving PDEs by the finite element method

We start with function approximation, then we treat PDEs

Find a vector in some space that approximates a given vector in a space of higher dimension

The approximation is a linear combination of prescribed basis functions

We have three methods to determine the coefficients

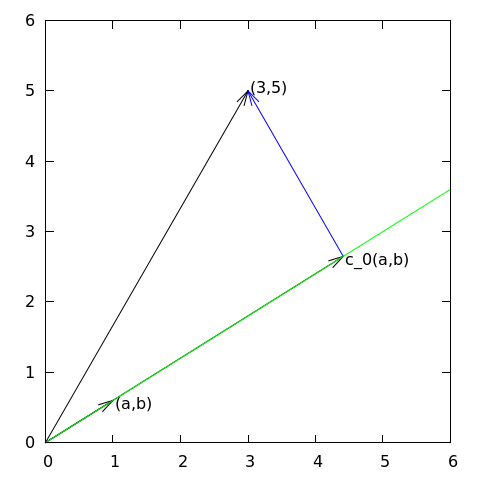

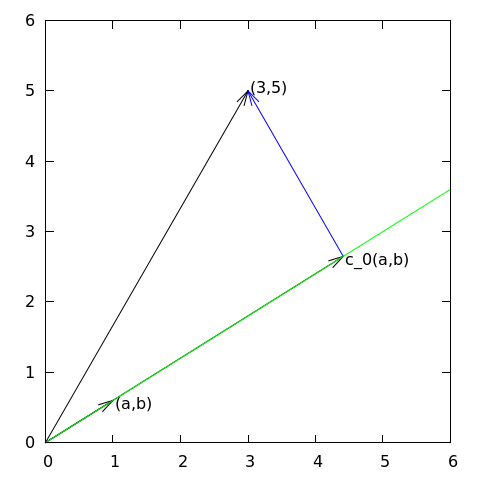

Approximation of planar vectors; problem

Approximation of planar vectors; vector space terminology

The least squares method; principle

The least squares method; calculations

The projection (or Galerkin) method

Approximation of general vectors

The least squares method

The projection (or Galerkin) method

Approximation of a function in a function space

The least squares method can be extended from vectors to functions

The least squares method; details

The projection (or Galerkin) method

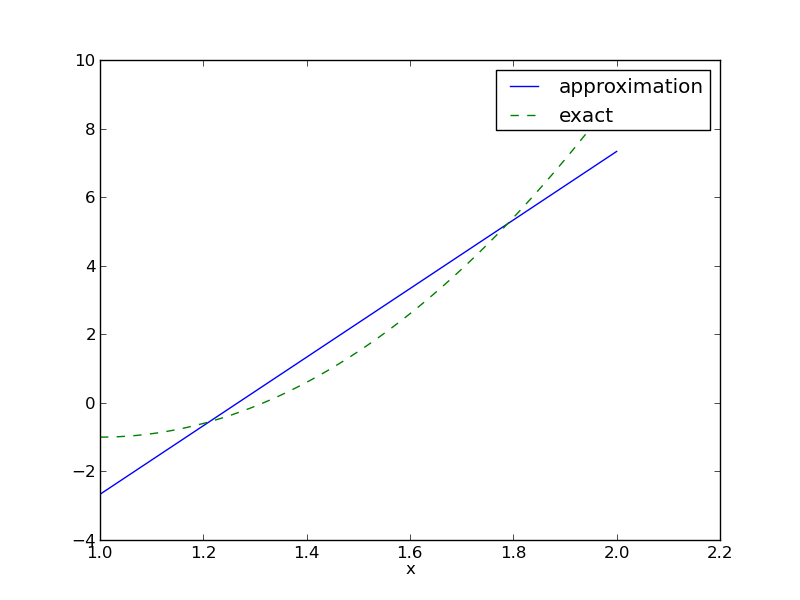

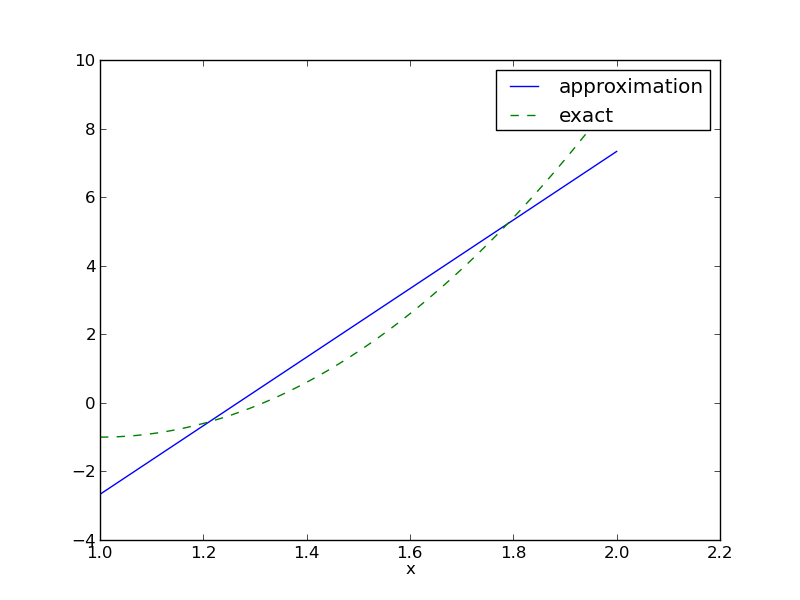

Example: fit a parabola by a straight line; problem

Example: fit a parabola by a straight line; solution

Example: fit a parabola by a straight line; plot

Ideas for implementing the least squares method via symbolic computations

Basic symbolic (SymPy) code for least squares

Improved code if symbolic integration fails

Plotting of the solution

Application of the software: fit a parabola by a straight line

The approximation is exact if \( f\in V \)

The general result: perfect approximation if \( f\in V \)

Proof of why \( f\in V \) gives exact \( u \)

Finite-precision in numerical computations; question

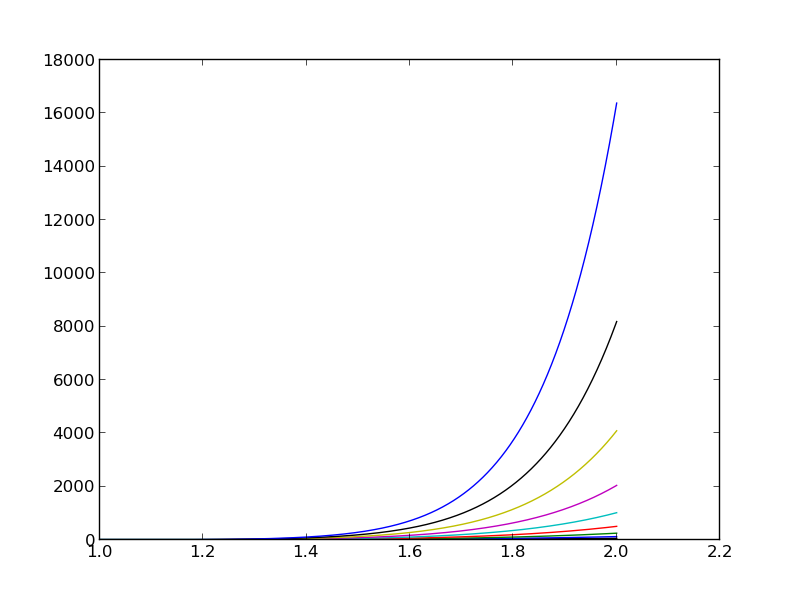

Finite-precision in numerical computations; results

The ill-conditioning is due to almost linearly dependent basis functions for large \( N \)

Ill-conditioning: general conclusions

Fourier series approximation; problem and code

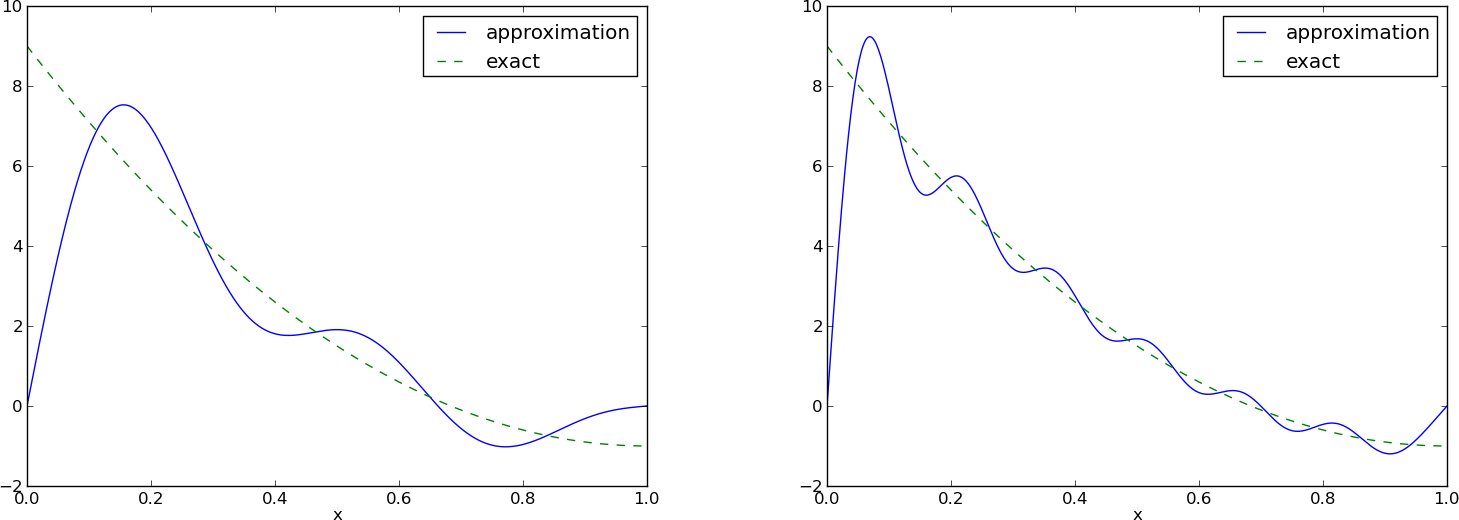

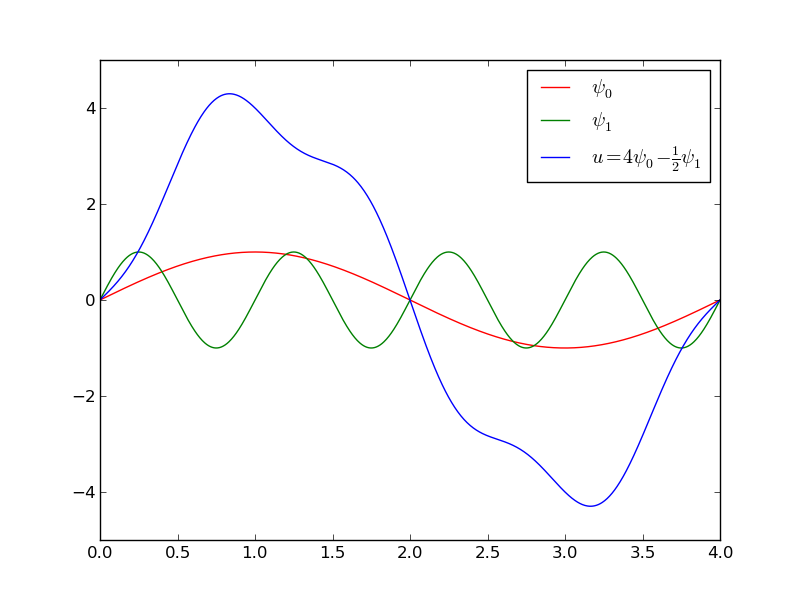

Fourier series approximation; plot

Fourier series approximation; improvements

Fourier series approximation; final results

Orthogonal basis functions

Function for the least squares method with orthogonal basis functions

Function for the least squares method with orthogonal basis functions; symbolic and numerical integration

The collocation or interpolation method; ideas and math

The collocation or interpolation method; implementation

The collocation or interpolation method; approximating a parabola by linear functions

The regression method

The regression method leads to an overdetermined linear system

A least squares method is used to solve overdetermined linear systems

Implementation

Example on using the regression method; code

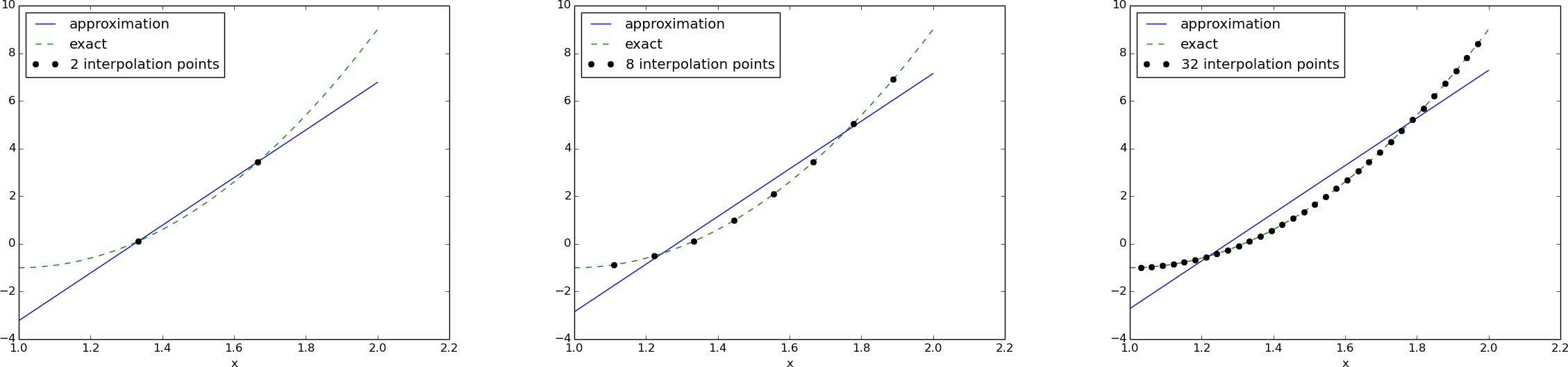

Example on using the regression method; result

What is the regression method used for?

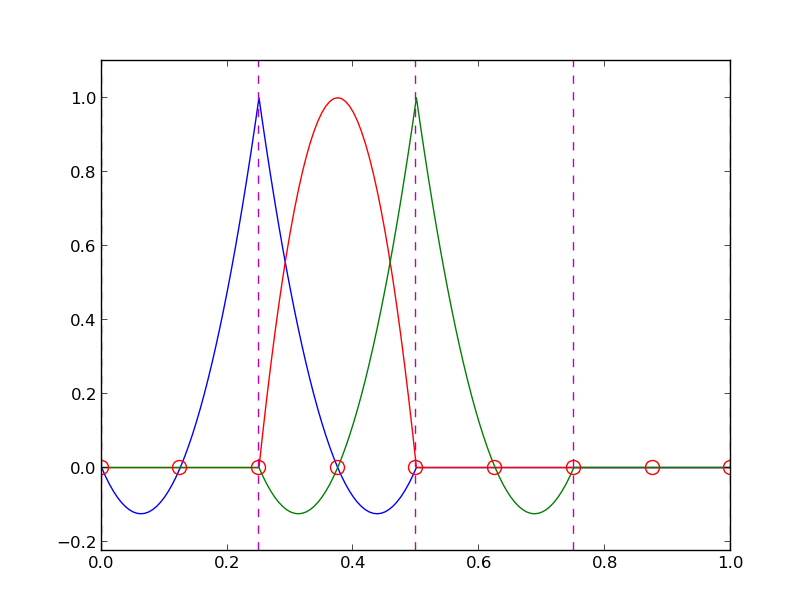

Lagrange polynomials; motivation and ideas

Lagrange polynomials; formula and code

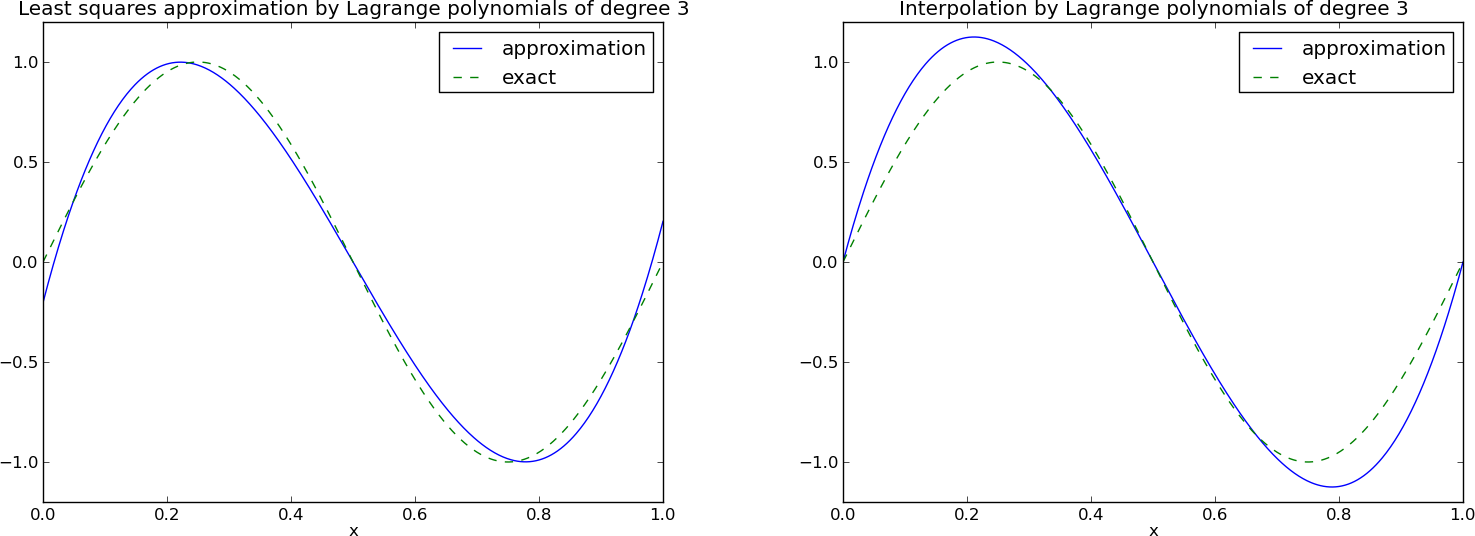

Lagrange polynomials; successful example

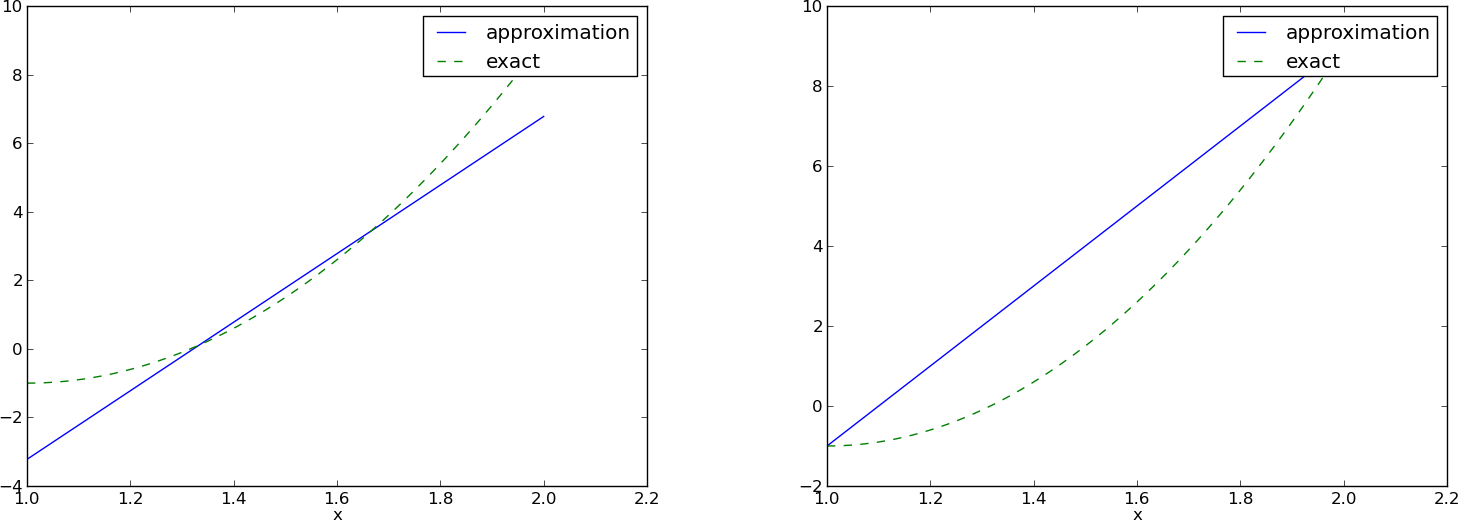

Lagrange polynomials; a less successful example

Lagrange polynomials; oscillatory behavior

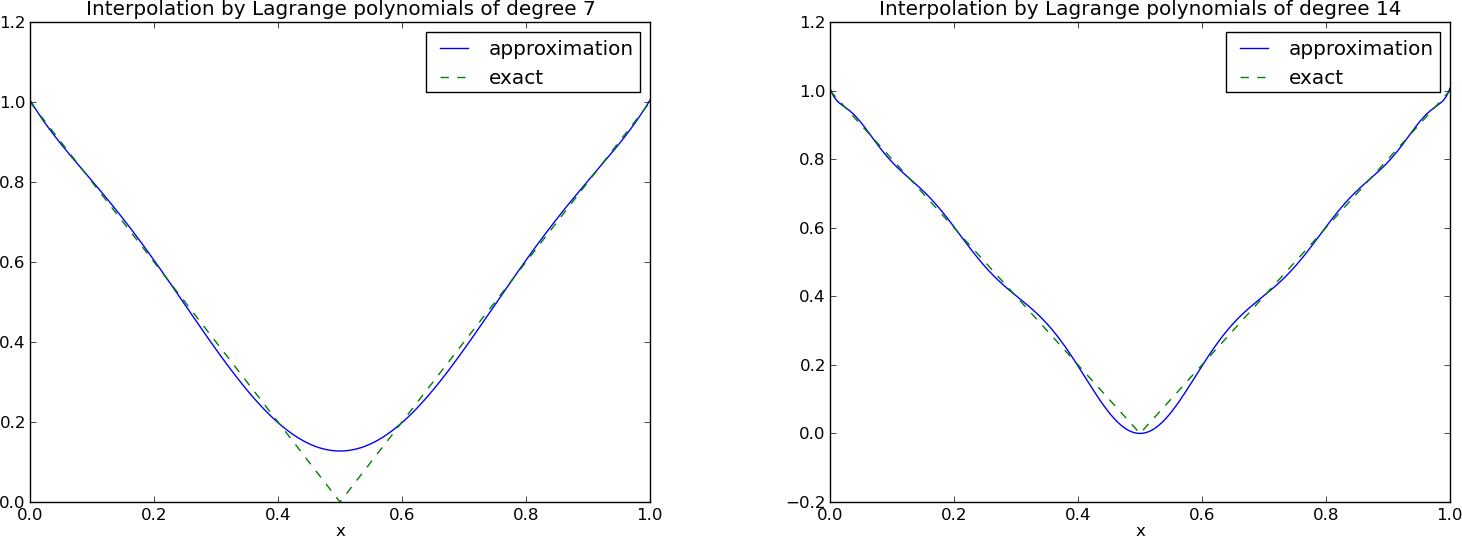

Lagrange polynomials; remedy for strong oscillations

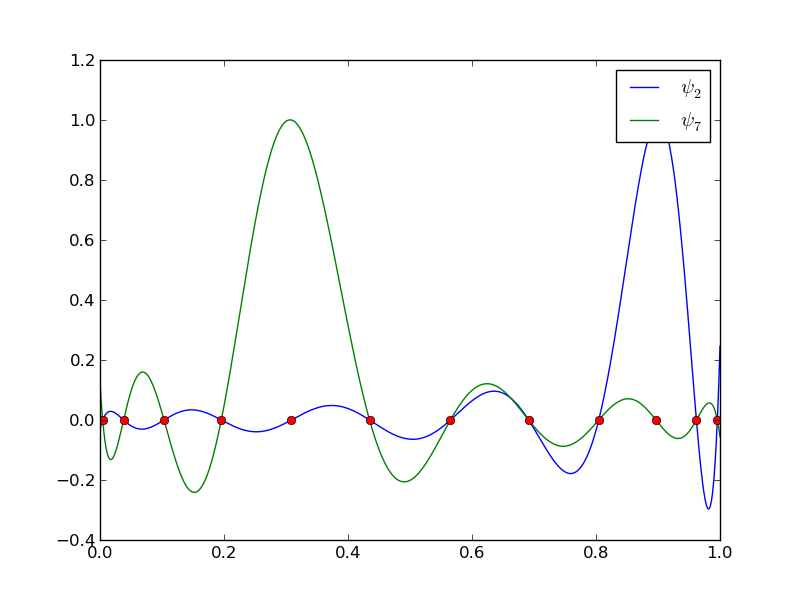

Lagrange polynomials; recalculation with Chebyshev nodes

Lagrange polynomials; less oscillations with Chebyshev nodes

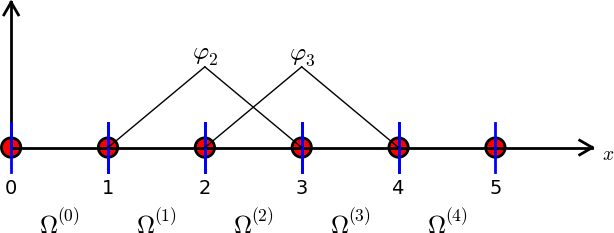

Finite element basis functions

The basis functions have so far been global: \( \baspsi_i(x) \neq 0 \) almost everywhere

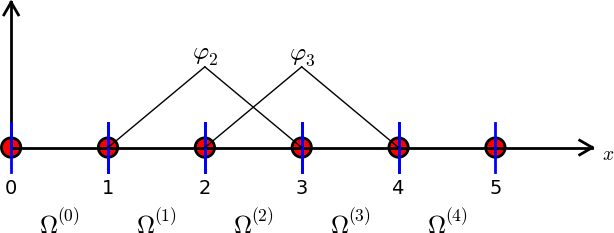

In the finite element method we use basis functions with local support

The linear combination of hat functions is a piecewise linear function

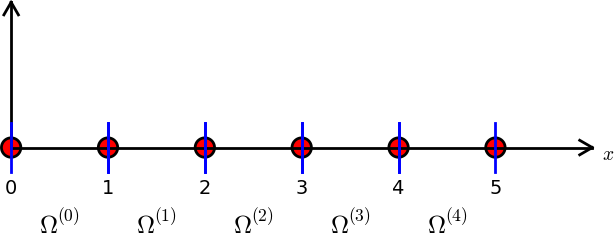

Elements and nodes

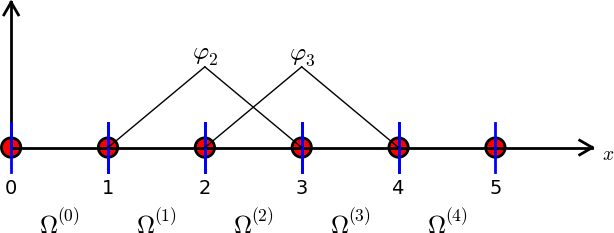

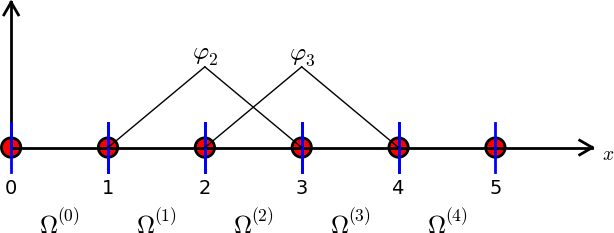

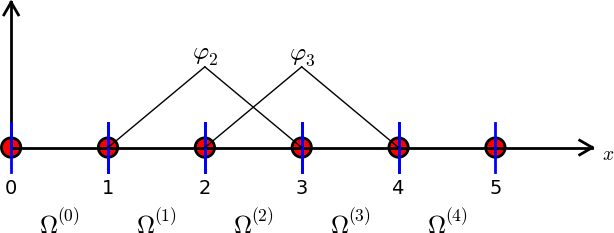

Example on elements with two nodes (P1 elements)

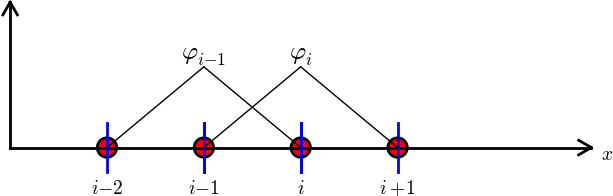

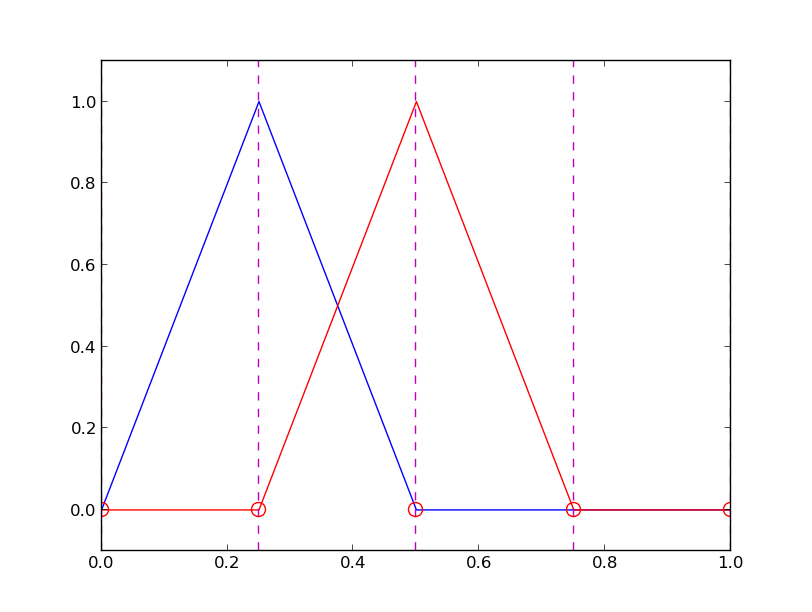

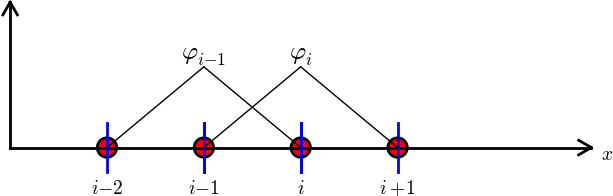

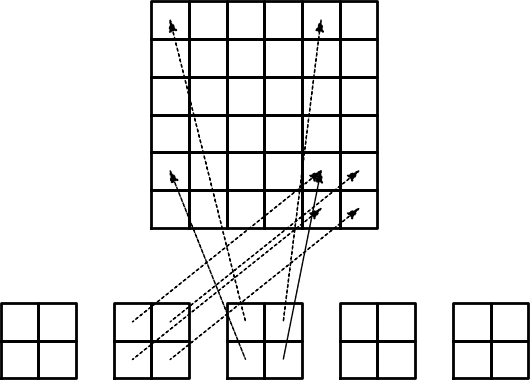

Illustration of two basis functions on the mesh

Example on elements with three nodes (P2 elements)

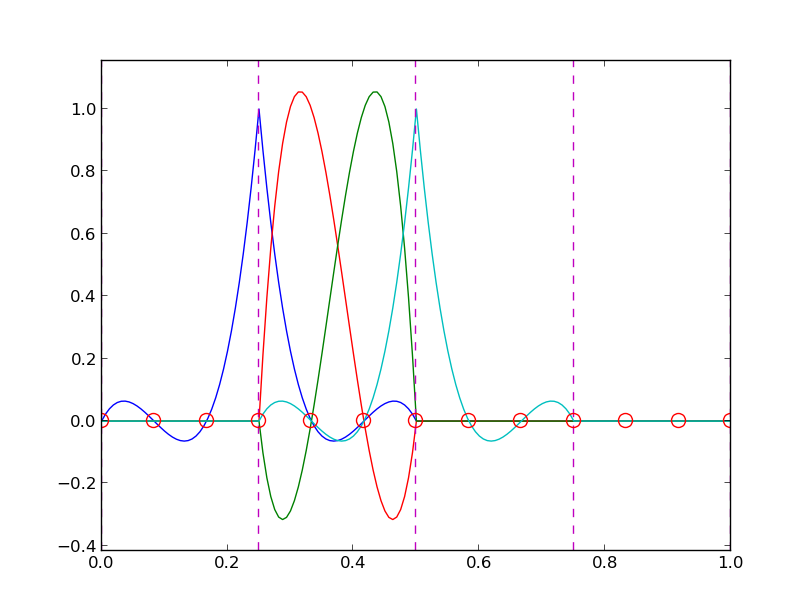

Some corresponding basis functions (P2 elements)

Examples on elements with four nodes (P3 elements)

Some corresponding basis functions (P3 elements)

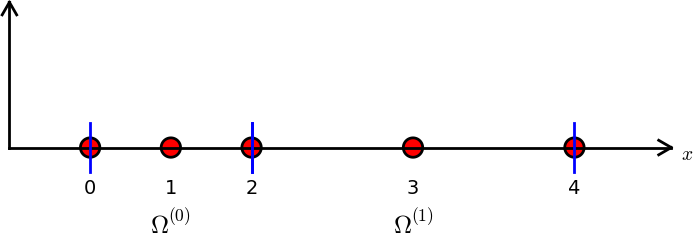

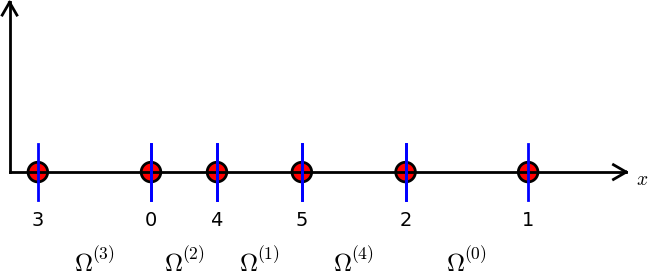

The numbering does not need to be regular from left to right

Interpretation of the coefficients \( c_i \)

Properties of the basis functions

How to construct quadratic \( \basphi_i \) (P2 elements)

Example on linear \( \basphi_i \) (P1 elements)

Example on cubic \( \basphi_i \) (P3 elements)

Calculating the linear system for \( c_i \)

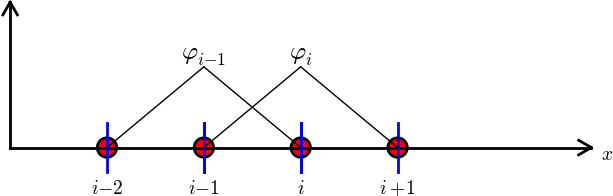

Computing a specific matrix entry (1)

Computing a specific matrix entry (2)

Calculating a general row in the matrix; figure

Calculating a general row in the matrix; details

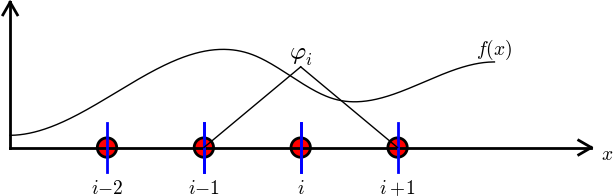

Calculation of the right-hand side

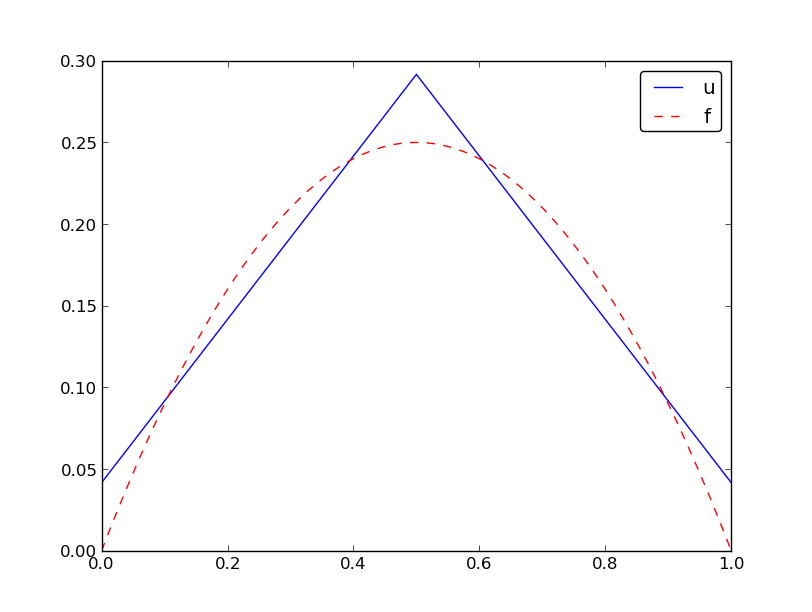

Specific example with two elements; linear system and solution

Specific example with two elements; plot

Specific example with four elements; plot

Specific example: what about P2 elements?

Assembly of elementwise computations

Split the integrals into elementwise integrals

The element matrix and local vs global node numbers

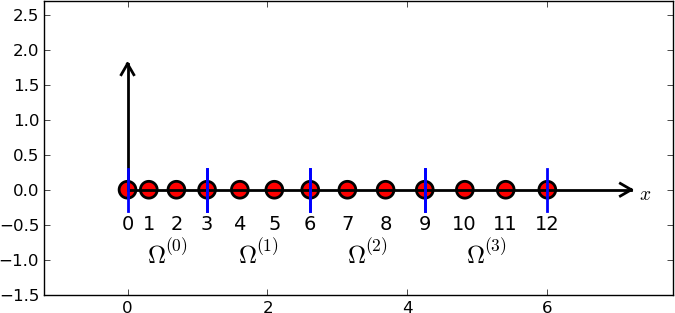

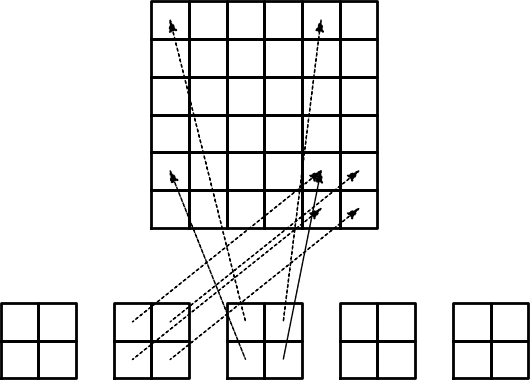

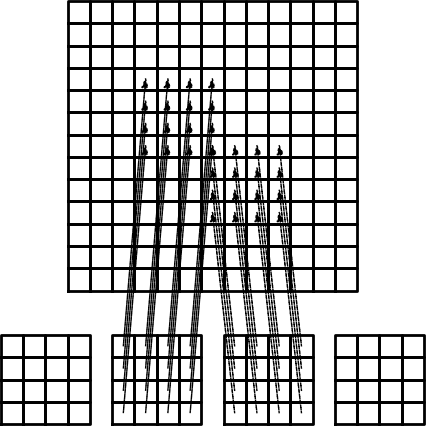

Illustration of the matrix assembly: regularly numbered P1 elements

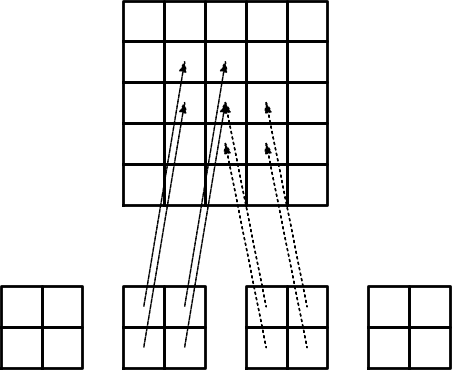

Illustration of the matrix assembly: regularly numbered P3 elements

Illustration of the matrix assembly: irregularly numbered P1 elements

Assembly of the right-hand side

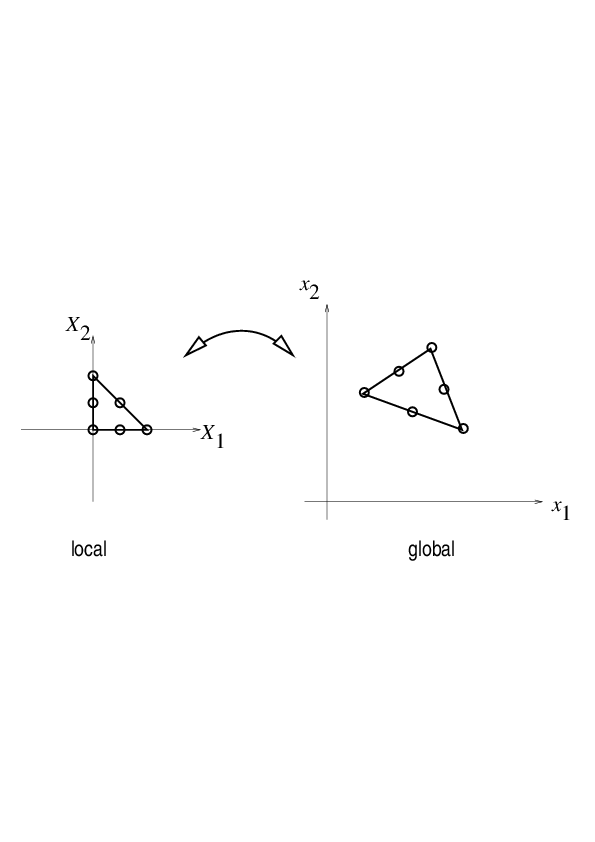

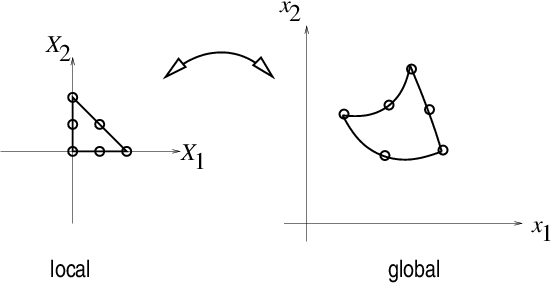

Mapping to a reference element

We use affine mapping: linear stretch of \( X\in [-1,1] \) to \( x\in [x_L,x_R] \)

Integral transformation

Advantages of the reference element

Standardized basis functions for P1 elements

Standardized basis functions for P2 elements

How to find the polynomial expressions?

Integration over a reference element; element matrix

Integration over a reference element; element vector

Tedious calculations! Let's use symbolic software

Implementation

Compute finite element basis functions in the reference element

Compute the element matrix

Example on symbolic vs numeric element matrix

Compute the element vector

Fallback on numerical integration if symbolic integration of \( \int f\refphi_r dx \) fails

Linear system assembly and solution

Linear system solution

Example on computing symbolic approximations

Example on computing numerical approximations

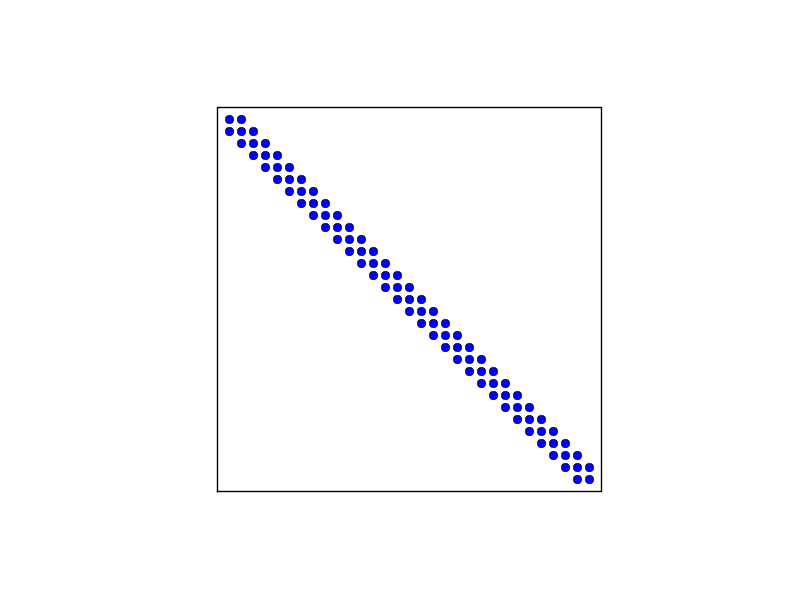

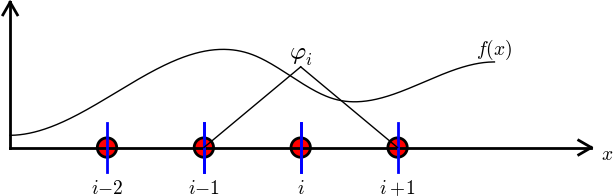

The structure of the coefficient matrix

General result: the coefficient matrix is sparse

Exemplifying the sparsity for P2 elements

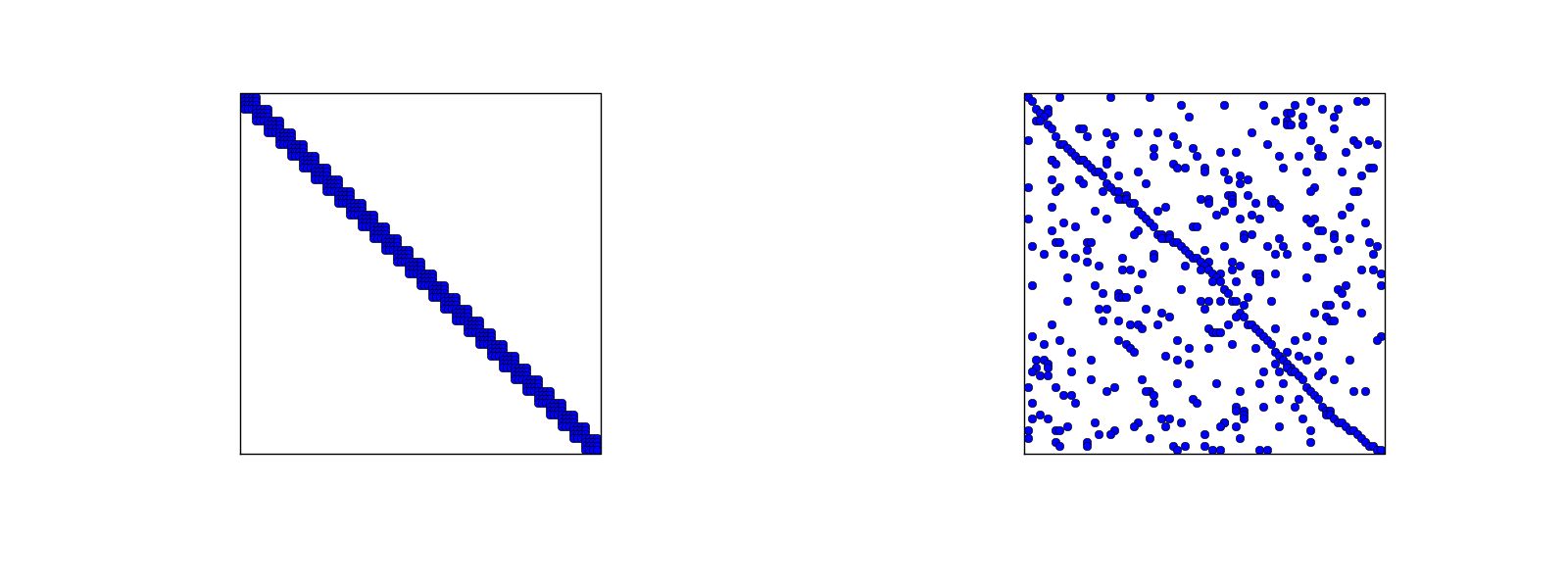

Matrix sparsity pattern for regular/random numbering of P1 elements

Matrix sparsity pattern for regular/random numbering of P3 elements

Sparse matrix storage and solution

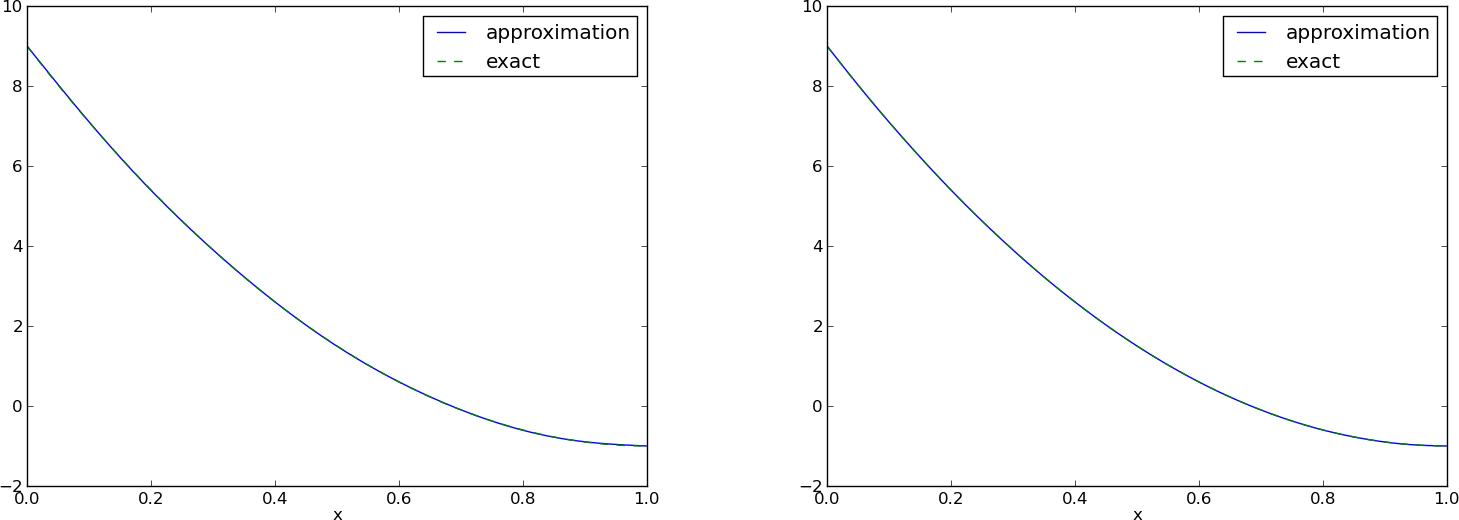

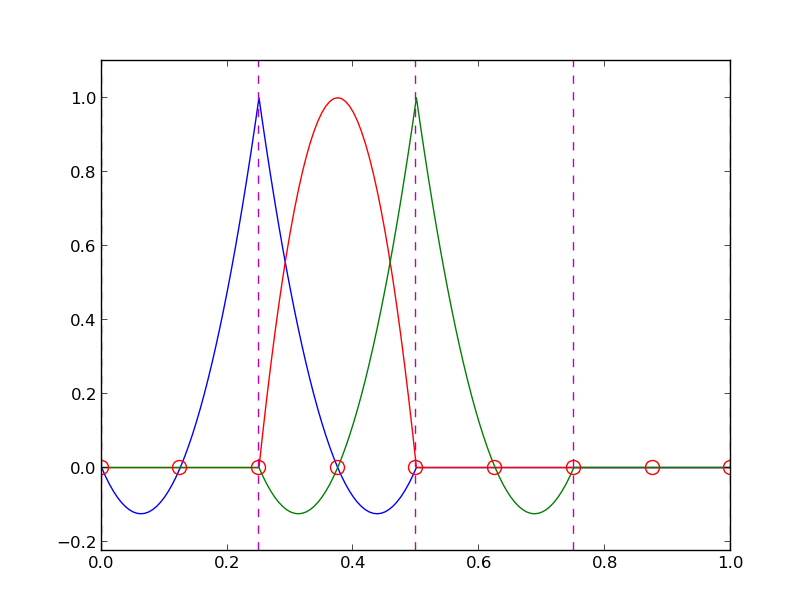

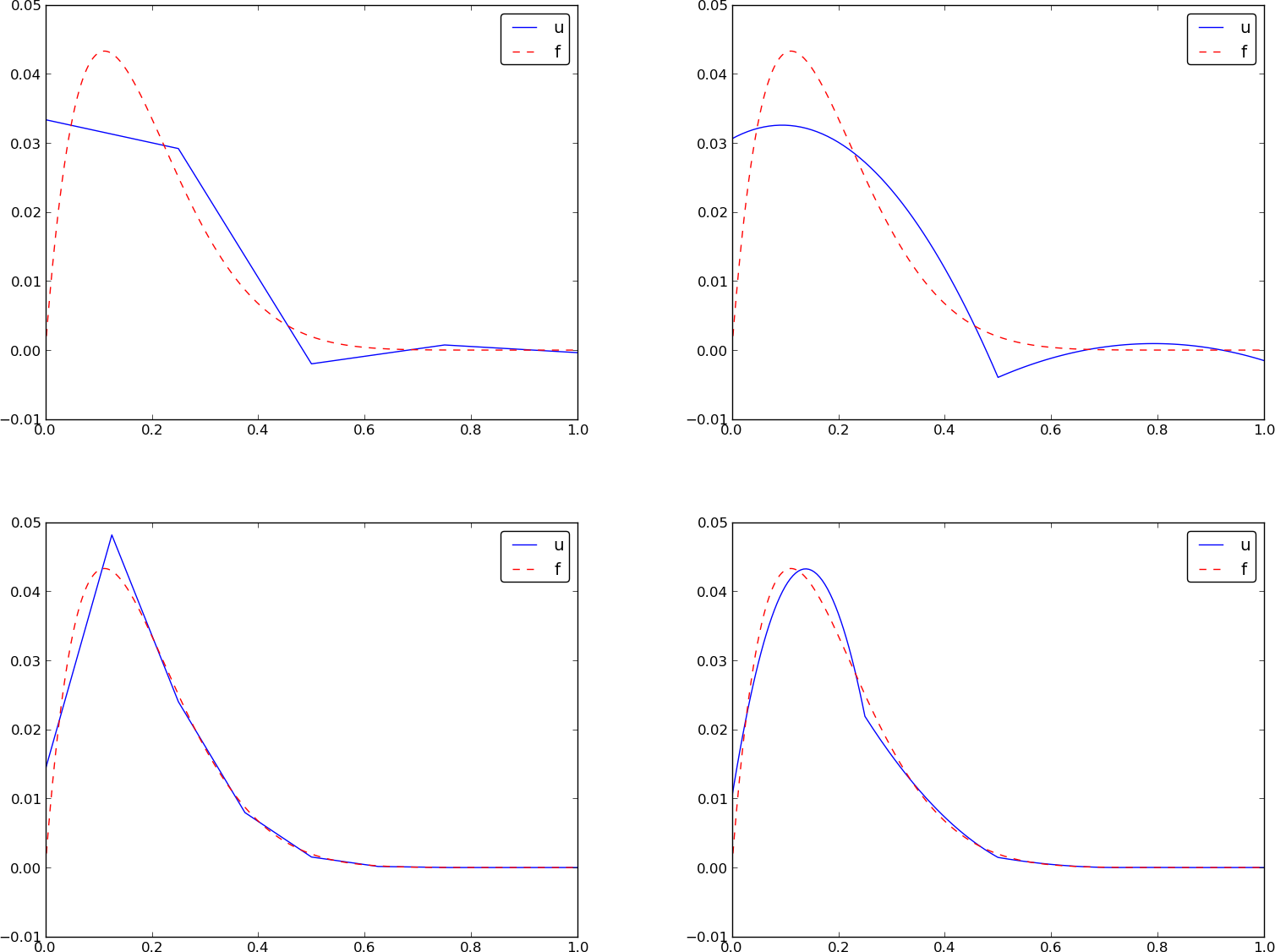

Approximate \( f\sim x^9 \) by various elements; code

Approximate \( f\sim x^9 \) by various elements; plot

Comparison of finite element and finite difference approximation

Interpolation/collocation with finite elements

Galerkin/project and least squares vs collocation/interpolation or finite differences

Expressing the left-hand side in finite difference operator notation

Treating the right-hand side; Trapezoidal rule

Treating the right-hand side; Simpson's rule

Finite element approximation vs finite differences

Making finite elements behave as finite differences

Limitations of the nodes and element concepts

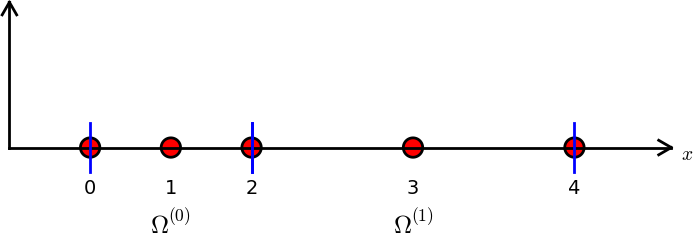

The generalized element concept has cells, vertices, nodes, and degrees of freedom

The concept of a finite element

Basic data structures: vertices, cells, dof_map

Example: data structures for P2 elements

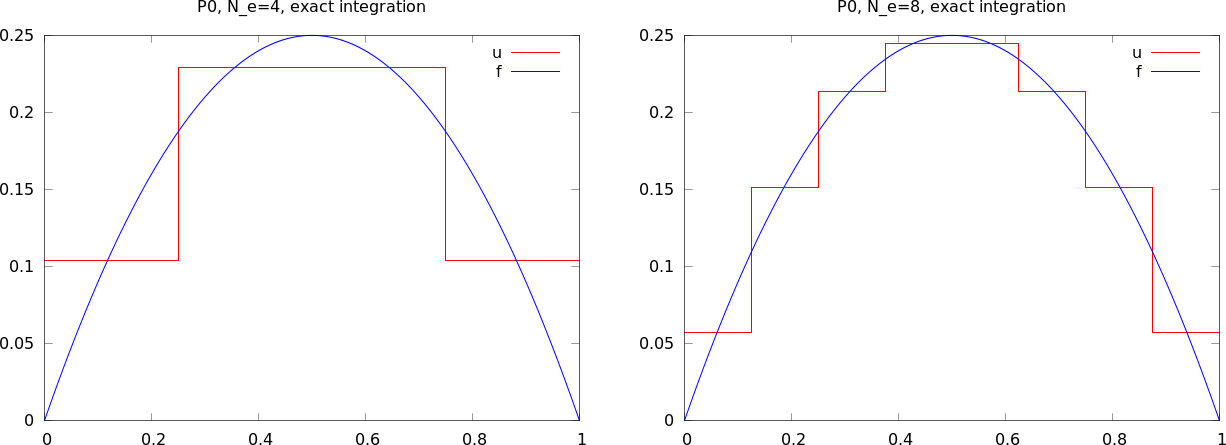

Example: P0 elements

A program with the fundamental algorithmic steps

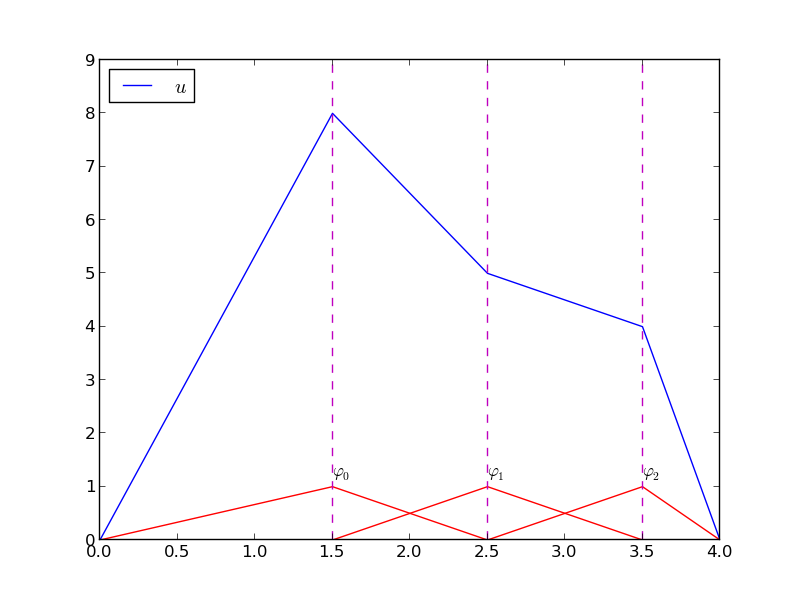

Approximating a parabola by P0 elements

Computing the error of the approximation; principles

Computing the error of the approximation; details

How does the error depend on \( h \) and \( d \)?

Cubic Hermite polynomials; definition

Cubic Hermite polynomials; derivation

Cubic Hermite polynomials; result

Numerical integration

Common form of a numerical integration rule

The Midpoint rule

Newton-Cotes rules apply the nodes

Gauss-Legendre rules apply optimized points

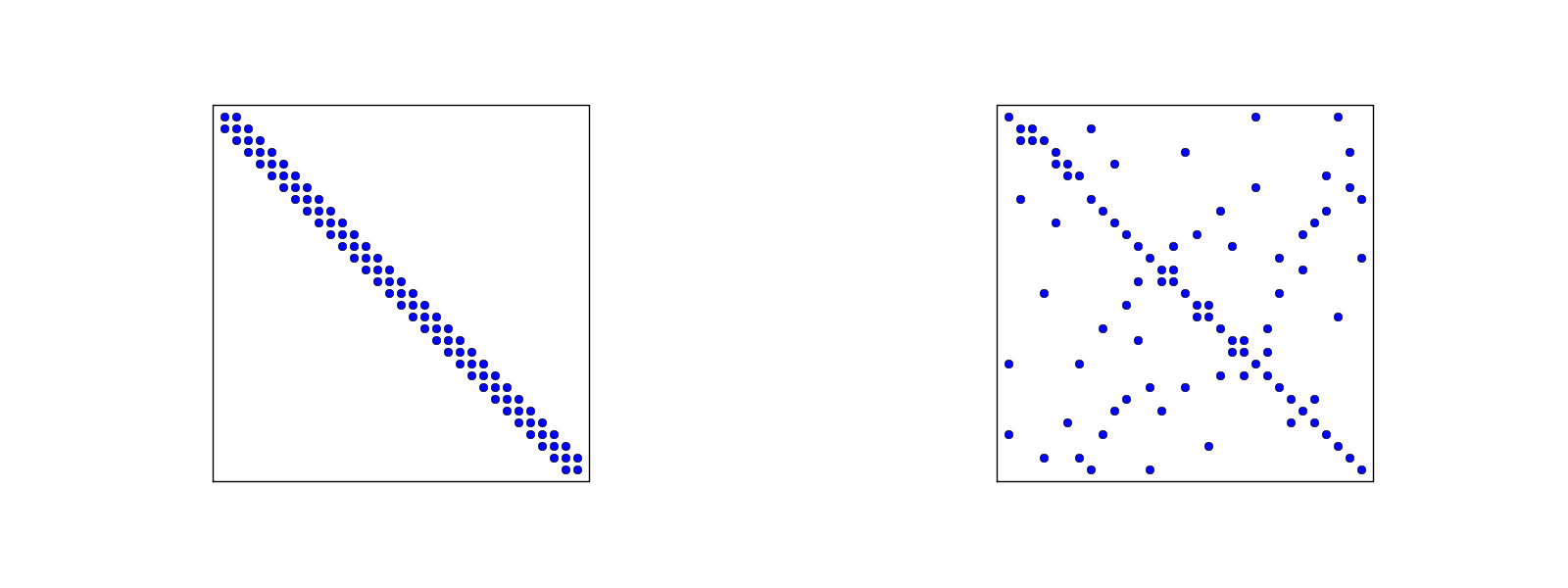

Approximation of functions in 2D

Quick overview of the 2D case

2D basis functions as tensor products of 1D functions

Tensor products

Double or single index?

Example on 2D (bilinear) basis functions; formulas

Example on 2D (bilinear) basis functions; plot

Implementation; principal changes to the 1D code

Implementation; 2D integration

Implementation; 2D basis functions

Implementation; application

Implementation; trying a perfect expansion

Generalization to 3D

Finite elements in 2D and 3D

Examples on cell types

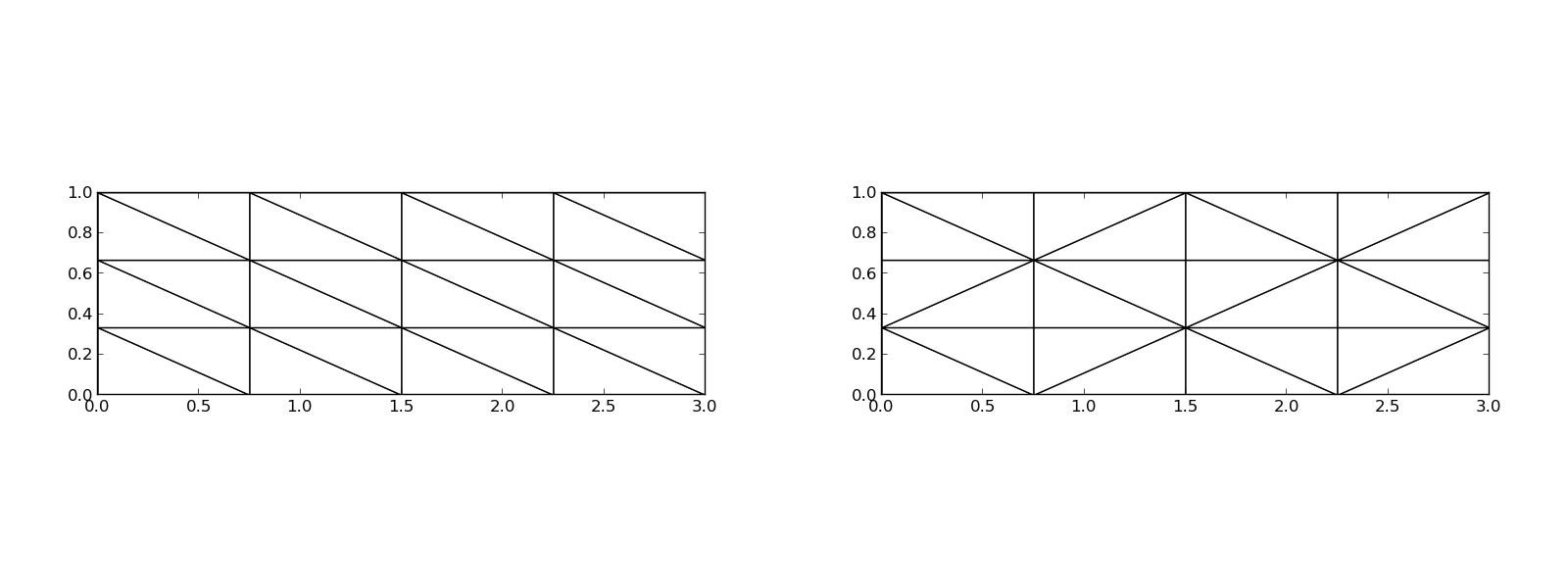

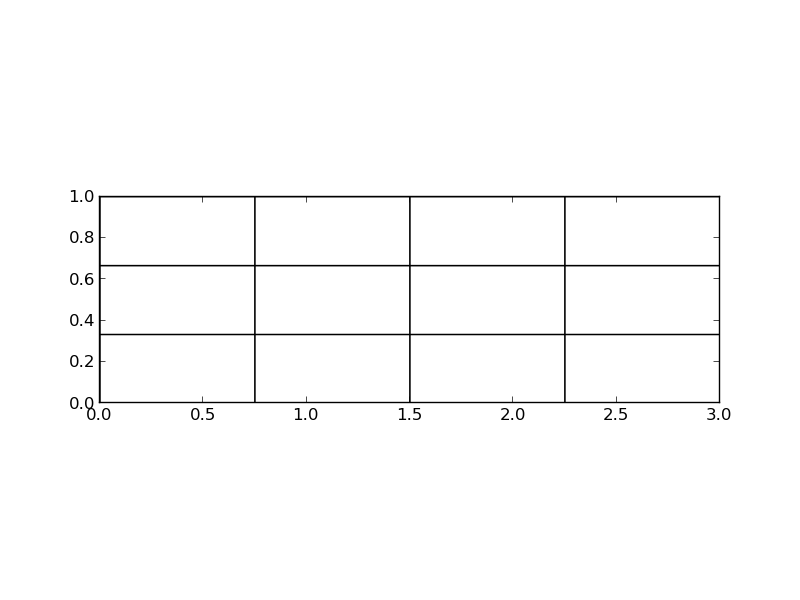

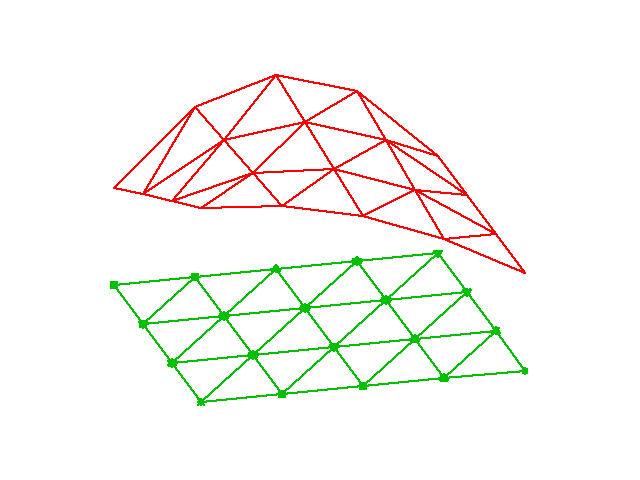

Rectangular domain with 2D P1 elements

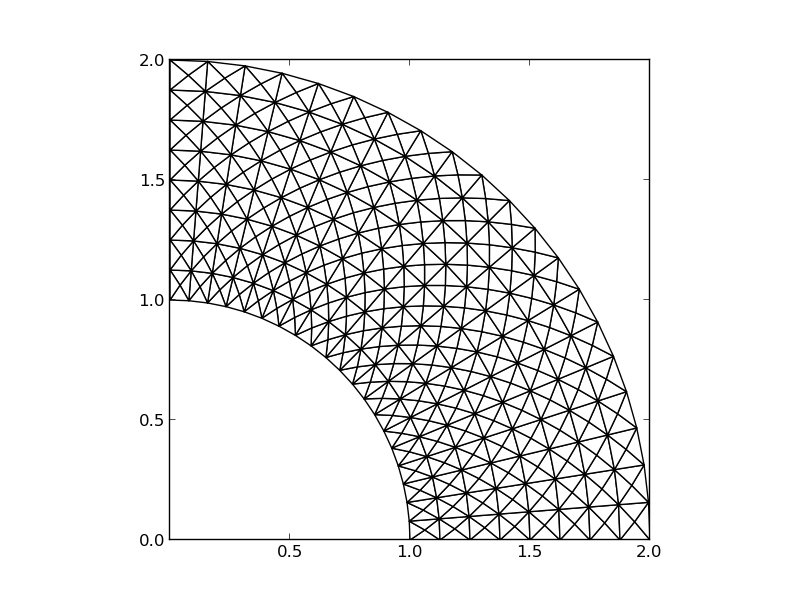

Deformed geometry with 2D P1 elements

Rectangular domain with 2D Q1 elements

Basis functions over triangles in the physical domain

Basic features of 2D elements

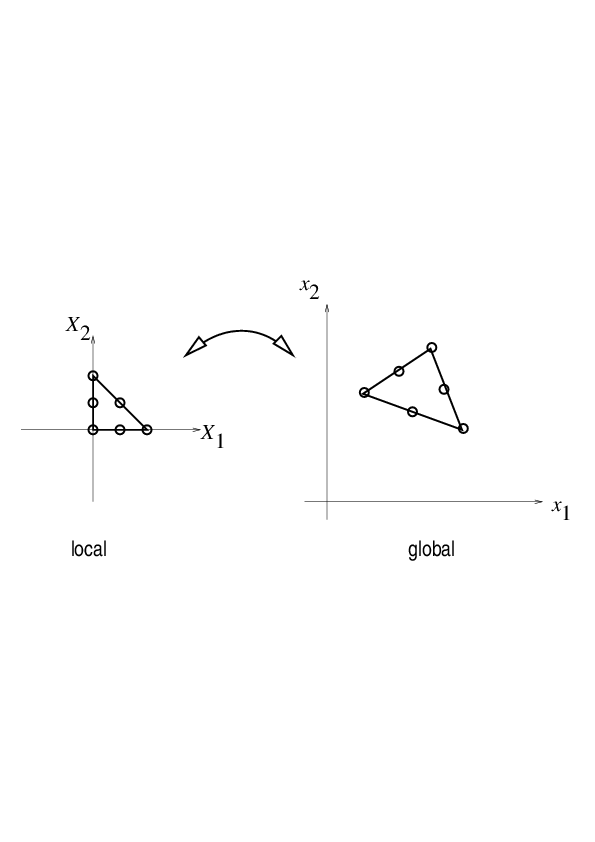

Linear mapping of reference element onto general triangular cell

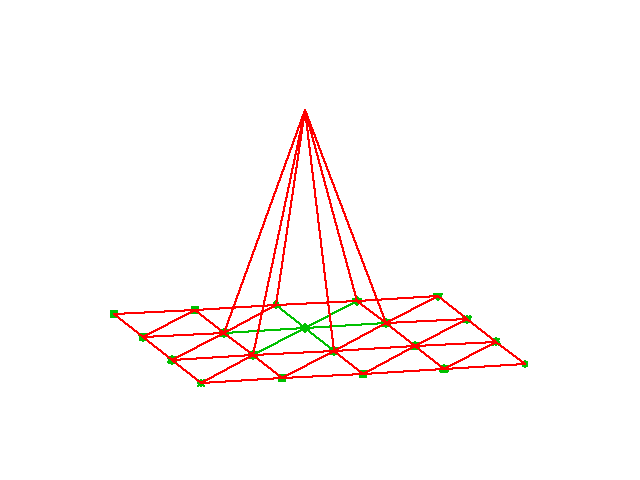

\( \basphi_i \): pyramid shape, composed of planes

Element matrices and vectors

Basis functions over triangles in the reference cell

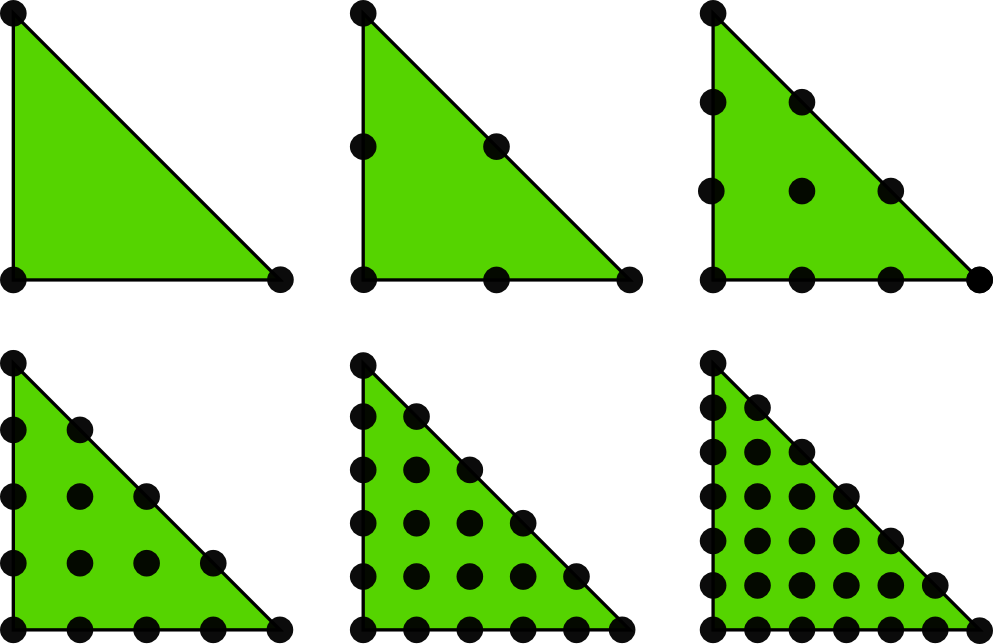

2D P1, P2, P3, P4, P5, and P6 elements

P1 elements in 1D, 2D, and 3D

P2 elements in 1D, 2D, and 3D

Affine mapping of the reference cell; formula

Affine mapping of the reference cell; figure

Isoparametric mapping of the reference cell

Computing integrals

Remark on going from 1D to 2D/3D

The finite element method has many concepts and a jungle of details. This learning strategy minimizes the mixing of ideas, concepts, and technical details.

General idea of finding an approximation \( u(x) \) to some given \( f(x) \): $$ u(x) = \sum_{i=0}^N c_i\baspsi_i(x) $$

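where the \( \baspsi_i(x) \) are prescribed basis functions and the \( c_i \) are coefficients to be determined.

We shall address three approaches: the least squares method, the projection (or Galerkin) method, and the interpolation (or collocation) method.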

Our mathematical framework for doing this is phrased in a way such that it becomes easy to understand and use the FEniCS software package for finite element computing.

Given a vector \( \f = (3,5) \), find an approximation to \( \f \) directed along a given line.

Observation to be used later: the vanishing derivative \eqref{fem:vec:dEdc0:v1} can be alternatively written as $$ (\e, \psib_0) = 0 $$
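As a concrete check of this orthogonality condition, here is a minimal sketch that computes the best approximation of \( \f=(3,5) \) along a line. The direction vector (2,1) is an assumption made for illustration (the text only says the line is given), and the helper name dot is hypothetical.

```python
from fractions import Fraction

def dot(u, v):
    """Euclidean inner product of two vectors."""
    return sum(ui*vi for ui, vi in zip(u, v))

f = (Fraction(3), Fraction(5))
psi0 = (Fraction(2), Fraction(1))   # assumed direction of the line

# One-dimensional least squares: c0 = (f, psi0)/(psi0, psi0)
c0 = dot(f, psi0)/dot(psi0, psi0)
u = tuple(c0*p for p in psi0)                 # the approximation
e = tuple(fi - ui for fi, ui in zip(f, u))    # the error vector

print(c0, u, e, dot(e, psi0))
```

Exact rational arithmetic is used so that the inner product of the error with the basis vector comes out as exactly zero rather than a small rounding residue.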

Given a vector \( \f \), find an approximation \( \u\in V \): $$ \begin{equation*} V = \hbox{span}\,\{\psib_0,\ldots,\psib_N\} \end{equation*} $$

We have a set of linearly independent basis vectors \( \psib_0,\ldots,\psib_N \). Any \( \u\in V \) can then be written as $$ \u = \sum_{j=0}^Nc_j\psib_j$$

Idea: find \( c_0,\ldots,c_N \) such that \( E= ||\e||^2 \) is minimized, \( \e=\f-\u \). $$ \begin{align*} E(c_0,\ldots,c_N) &= (\e,\e) = (\f -\sum_jc_j\psib_j,\f -\sum_jc_j\psib_j) \nonumber\\ &= (\f,\f) - 2\sum_{j=0}^Nc_j(\f,\psib_j) + \sum_{p=0}^N\sum_{q=0}^N c_pc_q(\psib_p,\psib_q) \end{align*} $$ $$ \begin{equation*} \frac{\partial E}{\partial c_i} = 0,\quad i=0,\ldots,N \end{equation*} $$

After some work we end up with a linear system $$ \begin{align} \sum_{j=0}^N A_{i,j}c_j &= b_i,\quad i=0,\ldots,N \label{_auto1}\\ A_{i,j} &= (\psib_i,\psib_j) \label{_auto2}\\ b_i &= (\psib_i, \f) \label{_auto3} \end{align} $$

It can be shown that minimizing \( ||\e|| \) implies that \( \e \) is orthogonal to all \( \v\in V \): $$ (\e,\v)=0,\quad \forall\v\in V $$ which implies that \( \e \) must be orthogonal to each basis vector: $$ (\e,\psib_i)=0,\quad i=0,\ldots,N $$

This orthogonality condition is the principle of the projection (or Galerkin) method. It leads to the same linear system as the least squares method.

Let \( V \) be a function space spanned by a set of basis functions \( \baspsi_0,\ldots,\baspsi_N \), $$ \begin{equation*} V = \hbox{span}\,\{\baspsi_0,\ldots,\baspsi_N\} \end{equation*} $$

Find \( u\in V \) as a linear combination of the basis functions: $$ u = \sum_{j\in\If} c_j\baspsi_j,\quad\If = \{0,1,\ldots,N\} $$

As in the vector case, minimize the (square) norm of the error, \( E \), with respect to the coefficients \( c_j \), \( j\in\If \): $$ E = (e,e) = (f-u,f-u) = \left(f(x)-\sum_{j\in\If} c_j\baspsi_j(x), f(x)-\sum_{j\in\If} c_j\baspsi_j(x)\right) $$ $$ \frac{\partial E}{\partial c_i} = 0,\quad i\in\If $$

But what is the scalar product when \( \baspsi_i \) is a function? $$(f,g) = \int_\Omega f(x)g(x)\, dx$$ (natural extension of the Euclidean product \( (\u, \v) = \sum_j u_jv_j \))

The computations are identical to the vector case, and consequently we get a linear system $$ \sum_{j\in\If} A_{i,j}c_j = b_i,\ i\in\If,\quad A_{i,j} = (\baspsi_i,\baspsi_j),\ b_i = (f,\baspsi_i) $$

As before, minimizing \( (e,e) \) is equivalent to $$ (e,\baspsi_i)=0,\quad i\in\If \label{fem:approx:Galerkin0} $$

which is equivalent to $$ (e,v)=0,\quad\forall v\in V \label{fem:approx:Galerkin} $$ which is the projection (or Galerkin) method.

The algebra is the same as in the multi-dimensional vector case, and we get the same linear system as arose from the least squares method.

Approximate a parabola \( f(x) = 10(x-1)^2 - 1 \) by a straight line.
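The linear system for this example can be worked out with exact arithmetic. The sketch below is not the document's SymPy code: it represents polynomials as coefficient lists (helper names poly_mul and poly_int are hypothetical) and assumes the domain \( \Omega=[1,2] \) and the basis \( \{1, x\} \) used in the interactive session later in the text.

```python
from fractions import Fraction

def poly_mul(p, q):
    """Multiply polynomials given as coefficient lists [a0, a1, ...]."""
    r = [Fraction(0)]*(len(p) + len(q) - 1)
    for i, a in enumerate(p):
        for j, b in enumerate(q):
            r[i+j] += a*b
    return r

def poly_int(p, a, b):
    """Exact integral of the polynomial p over [a, b]."""
    a, b = Fraction(a), Fraction(b)
    return sum(c*(b**(k+1) - a**(k+1))/(k+1) for k, c in enumerate(p))

f = [9, -20, 10]        # 10(x-1)^2 - 1 = 9 - 20x + 10x^2
psi = [[1], [0, 1]]     # basis functions 1 and x
Omega = (1, 2)          # assumed domain

# A[i][j] = (psi_i, psi_j), b[i] = (f, psi_i)
A = [[poly_int(poly_mul(pi, pj), *Omega) for pj in psi] for pi in psi]
b = [poly_int(poly_mul(pi, f), *Omega) for pi in psi]

# Solve the 2x2 system by Cramer's rule
det = A[0][0]*A[1][1] - A[0][1]*A[1][0]
c = [(b[0]*A[1][1] - A[0][1]*b[1])/det,
     (A[0][0]*b[1] - A[1][0]*b[0])/det]
print(c)   # coefficients of u(x) = c[0] + c[1]*x
```

Under these assumptions the straight line is \( u(x) = 10x - 38/3 \).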

Consider symbolic computation of the linear system, where f is a sympy expression (involving the symbol x), psi is a list of \( \sequencei{\baspsi} \), and Omega is a 2-tuple/list holding the domain \( \Omega \).

    import sympy as sym

    def least_squares(f, psi, Omega):
        N = len(psi) - 1
        A = sym.zeros(N+1, N+1)
        b = sym.zeros(N+1, 1)
        x = sym.Symbol('x')
        for i in range(N+1):
            # Integrate only the upper triangle and mirror it
            for j in range(i, N+1):
                A[i,j] = sym.integrate(psi[i]*psi[j],
                                       (x, Omega[0], Omega[1]))
                A[j,i] = A[i,j]
            b[i,0] = sym.integrate(psi[i]*f, (x, Omega[0], Omega[1]))
        c = A.LUsolve(b)
        u = 0
        for i in range(len(psi)):
            u += c[i,0]*psi[i]
        return u, c

Observe: symmetric coefficient matrix so we can halve the integrations.

If sym.integrate fails, it returns a sym.Integral object. We can test for this object and fall back on numerical integration. We also include a parameter symbolic to explicitly choose between symbolic and numerical computing.

    import mpmath  # sympy.mpmath is no longer bundled with SymPy

    def least_squares(f, psi, Omega, symbolic=True):
        ...
        for i in range(N+1):
            for j in range(i, N+1):
                integrand = psi[i]*psi[j]
                if symbolic:
                    I = sym.integrate(integrand, (x, Omega[0], Omega[1]))
                if not symbolic or isinstance(I, sym.Integral):
                    # Could not integrate symbolically,
                    # fall back on numerical integration
                    integrand = sym.lambdify([x], integrand, modules='mpmath')
                    I = mpmath.quad(integrand, [Omega[0], Omega[1]])
                A[i,j] = A[j,i] = I

            integrand = psi[i]*f
            if symbolic:
                I = sym.integrate(integrand, (x, Omega[0], Omega[1]))
            if not symbolic or isinstance(I, sym.Integral):
                integrand = sym.lambdify([x], integrand, modules='mpmath')
                I = mpmath.quad(integrand, [Omega[0], Omega[1]])
            b[i,0] = I
        ...

Compare \( f \) and \( u \) visually:

    import numpy as np
    import matplotlib.pyplot as plt

    def comparison_plot(f, u, Omega, filename='tmp.pdf'):
        x = sym.Symbol('x')
        # Turn f and u to ordinary Python functions
        f = sym.lambdify([x], f, modules="numpy")
        u = sym.lambdify([x], u, modules="numpy")
        resolution = 401  # no of points in plot
        xcoor = np.linspace(Omega[0], Omega[1], resolution)
        exact = f(xcoor)
        approx = u(xcoor)
        plt.plot(xcoor, approx)
        # Both curves go in the same axes; hold('on') is no longer needed
        plt.plot(xcoor, exact)
        plt.legend(['approximation', 'exact'])
        plt.savefig(filename)

All code in module approx1D.py

>>> from approx1D import *

>>> x = sym.Symbol('x')

>>> f = 10*(x-1)**2-1

>>> u, c = least_squares(f=f, psi=[1, x], Omega=[1, 2])

>>> comparison_plot(f, u, Omega=[1, 2])

>>> from approx1D import *

>>> x = sym.Symbol('x')

>>> f = 10*(x-1)**2-1

>>> u, c = least_squares(f=f, psi=[1, x, x**2], Omega=[1, 2])

>>> print(u)

10*x**2 - 20*x + 9

>>> print(sym.expand(f))

10*x**2 - 20*x + 9

If the basis is extended with \( x^i \) for \( i>2 \), the output from least_squares is \( c_i=0 \) for \( i>2 \).

If \( f\in V \), least squares and projection/Galerkin give \( u=f \).

If \( f\in V \), \( f=\sum_{j\in\If}d_j\baspsi_j \), for some \( \sequencei{d} \). Then $$ \begin{equation*} b_i = (f,\baspsi_i) = \sum_{j\in\If}d_j(\baspsi_j, \baspsi_i) = \sum_{j\in\If} d_jA_{i,j} \end{equation*} $$ The linear system \( \sum_j A_{i,j}c_j = b_i \), \( i\in\If \), is then $$ \begin{equation*} \sum_{j\in\If}c_jA_{i,j} = \sum_{j\in\If}d_jA_{i,j},\quad i\in\If \end{equation*} $$ which implies that \( c_i=d_i \) for \( i\in\If \) and \( u \) is identical to \( f \).

The previous computations were symbolic. What if we solve the linear system numerically with standard arrays?

That is, \( f \) is a parabola, but we approximate with $$ u(x) = c_0 + c_1x + c_2x^2 + c_3x^3 +\cdots + c_Nx^N $$

We expect \( c_3=c_4=\cdots=c_N=0 \) since \( f\in V \) implies \( u=f \).

Will we get this result with finite precision computer arithmetic?

| exact | sympy | numpy32 | numpy64 |
|---|---|---|---|
| 9 | 9.62 | 5.57 | 8.98 |
| -20 | -23.39 | -7.65 | -19.93 |
| 10 | 17.74 | -4.50 | 9.96 |
| 0 | -9.19 | 4.13 | -0.26 |
| 0 | 5.25 | 2.99 | 0.72 |
| 0 | 0.18 | -1.21 | -0.93 |
| 0 | -2.48 | -0.41 | 0.73 |
| 0 | 1.81 | -0.013 | -0.36 |
| 0 | -0.66 | 0.08 | 0.11 |
| 0 | 0.12 | 0.04 | -0.02 |
| 0 | -0.001 | -0.02 | 0.002 |

Column 2: matrix and lu_solve from sympy.mpmath.fp. Column 3: numpy matrix with 4-byte floats. Column 4: numpy matrix with 8-byte floats.
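One crude way to see why the computed coefficients drift is to look at the determinant of the coefficient matrix for the monomial basis. The sketch below is an illustration under stated assumptions: the basis is \( 1, x, \ldots, x^N \) on \( \Omega=[1,2] \), and the helpers gram_matrix and det are hypothetical names. It uses exact rational arithmetic, so the shrinking determinant is a property of the basis and not of rounding.

```python
from fractions import Fraction

def gram_matrix(N, a=1, b=2):
    """A[i][j] = integral of x^i * x^j over [a, b], computed exactly."""
    a, b = Fraction(a), Fraction(b)
    return [[(b**(i+j+1) - a**(i+j+1))/(i+j+1) for j in range(N+1)]
            for i in range(N+1)]

def det(M):
    """Determinant by exact Gaussian elimination with row swaps."""
    M = [row[:] for row in M]
    n, d = len(M), Fraction(1)
    for k in range(n):
        p = next((r for r in range(k, n) if M[r][k] != 0), None)
        if p is None:
            return Fraction(0)
        if p != k:
            M[k], M[p] = M[p], M[k]
            d = -d
        d *= M[k][k]
        for r in range(k+1, n):
            factor = M[r][k]/M[k][k]
            for c in range(k, n):
                M[r][c] -= factor*M[k][c]
    return d

dets = [det(gram_matrix(N)) for N in range(1, 11)]
for N, value in enumerate(dets, start=1):
    print(N, float(value))
```

The determinant stays positive but falls by several orders of magnitude for each added basis function, which is consistent with the basis functions becoming almost linearly dependent for large \( N \).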

Let's approximate \( f \) by a typical Fourier series expansion $$ u(x) = \sum_i a_i\sin i\pi x = \sum_{j=0}^Nc_j\sin((j+1)\pi x) $$

which means that $$ \begin{equation*} V = \hbox{span}\,\{ \sin \pi x, \sin 2\pi x,\ldots,\sin (N+1)\pi x\} \end{equation*} $$

Computations using the least_squares function:

N = 3

from sympy import sin, pi

psi = [sin(pi*(i+1)*x) for i in range(N+1)]

f = 10*(x-1)**2 - 1

Omega = [0, 1]

u, c = least_squares(f, psi, Omega)

comparison_plot(f, u, Omega)

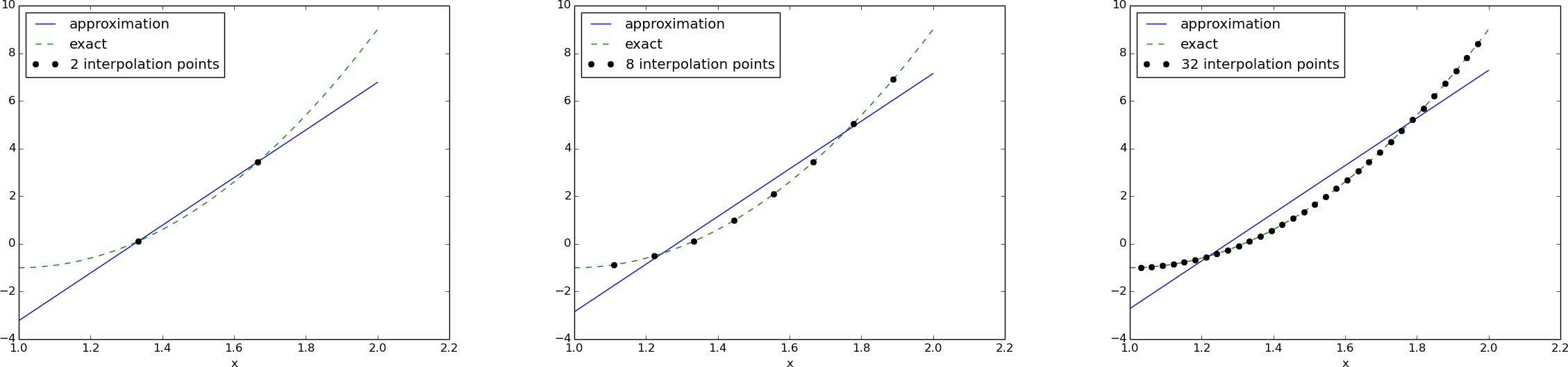

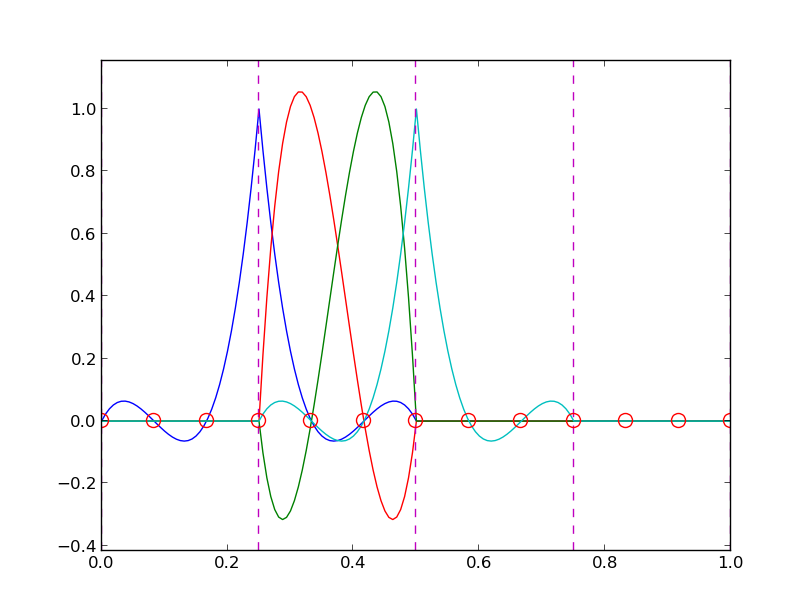

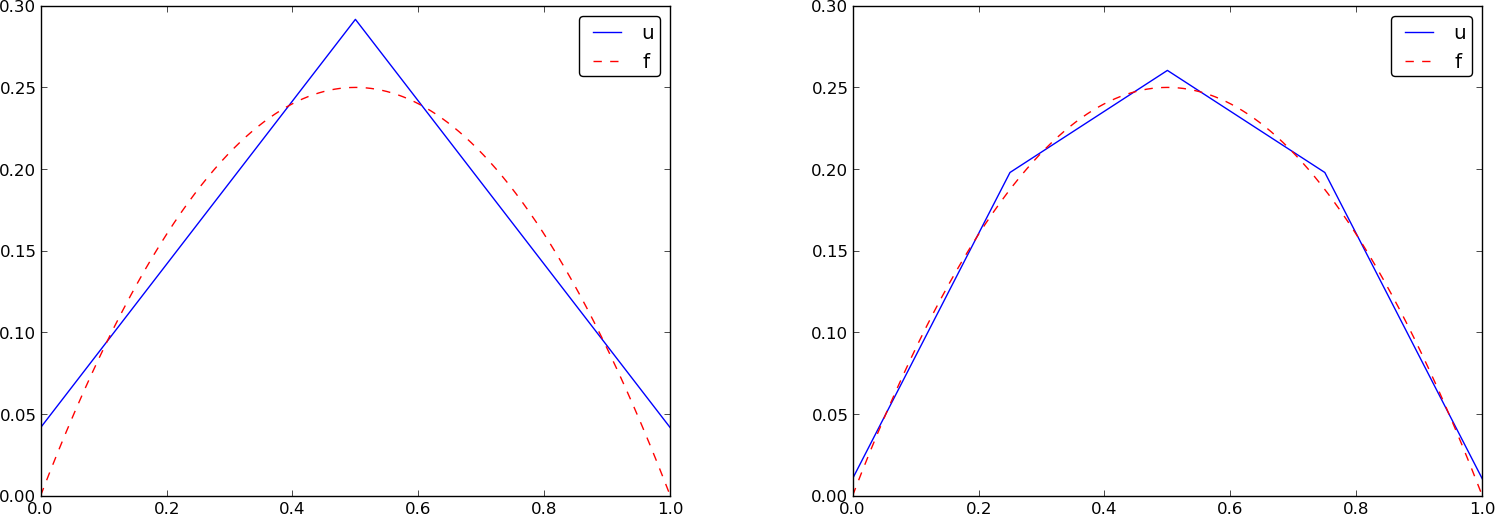

Left: \( N=3 \), right: \( N=11 \):

All \( \baspsi_i(0)=0 \) and hence \( u(0)=0 \neq f(0)=9 \). Similar problem at \( x=1 \). The boundary values of \( u \) are always wrong!

\( N=3 \) vs \( N=11 \):

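This choice of sine functions as basis functions is popular because the functions are orthogonal on \( [0,1] \): \( (\baspsi_i,\baspsi_j)=0 \) for \( i\neq j \). The coefficient matrix is then diagonal, and each coefficient follows directly as \( c_i = (f,\baspsi_i)/(\baspsi_i,\baspsi_i) \).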
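The orthogonality of the sine basis can be checked numerically. This is a small sketch, not part of the approx1D module: the function inner is a hypothetical helper that approximates the inner product on \( [0,1] \) with the Midpoint rule, and \( N=3 \) is chosen to match the example above.

```python
from math import sin, pi

def inner(f, g, n=1000):
    """Midpoint-rule approximation of the integral of f*g over [0, 1]."""
    h = 1.0/n
    return h*sum(f((k + 0.5)*h)*g((k + 0.5)*h) for k in range(n))

def psi(i):
    """Basis function sin((i+1)*pi*x)."""
    return lambda x: sin((i + 1)*pi*x)

N = 3
# G[i][j] approximates (psi_i, psi_j)
G = [[inner(psi(i), psi(j)) for j in range(N+1)] for i in range(N+1)]
for row in G:
    print(['%.3f' % abs(v) for v in row])
```

The off-diagonal entries vanish and the diagonal entries equal 1/2, so no linear system needs to be solved.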

    def least_squares_orth(f, psi, Omega):
        N = len(psi) - 1
        A = [0]*(N+1)   # diagonal of the coefficient matrix
        b = [0]*(N+1)
        x = sym.Symbol('x')
        for i in range(N+1):
            A[i] = sym.integrate(psi[i]**2, (x, Omega[0], Omega[1]))
            b[i] = sym.integrate(psi[i]*f, (x, Omega[0], Omega[1]))
        c = [b[i]/A[i] for i in range(len(b))]
        u = 0
        for i in range(len(psi)):
            u += c[i]*psi[i]
        return u, c

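Extensions: a choice between symbolic and numerical integration (symbolic argument), and a fallback on numerical integration if symbolic integration fails (sym.Integral is returned from sym.integrate).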

    import mpmath  # sympy.mpmath is no longer bundled with SymPy

    def least_squares_orth(f, psi, Omega, symbolic=True):
        ...
        for i in range(N+1):
            # Diagonal matrix term
            A[i] = sym.integrate(psi[i]**2, (x, Omega[0], Omega[1]))

            # Right-hand side term
            integrand = psi[i]*f
            if symbolic:
                I = sym.integrate(integrand, (x, Omega[0], Omega[1]))
            if not symbolic or isinstance(I, sym.Integral):
                print('numerical integration of', integrand)
                integrand = sym.lambdify([x], integrand, modules='mpmath')
                I = mpmath.quad(integrand, [Omega[0], Omega[1]])
            b[i] = I
        ...

Assumption above: \( \int_\Omega\baspsi_i^2dx \) works symbolically (but there is no guarantee!)

Here is another idea for approximating \( f(x) \) by \( u(x)=\sum_jc_j\baspsi_j \): require \( u(\xno{i}) = f(\xno{i}) \) at \( N+1 \) selected points \( \xno{i} \), \( i\in\If \).

This is a linear system with no need for integration: $$ \begin{align} \sum_{j\in\If} A_{i,j}c_j &= b_i,\quad i\in\If \label{_auto4}\\ A_{i,j} &= \baspsi_j(\xno{i}) \label{_auto5}\\ b_i &= f(\xno{i}) \label{_auto6} \end{align} $$

The matrix is not symmetric: \( \baspsi_j(\xno{i})\neq \baspsi_i(\xno{j}) \) in general
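A small worked case makes the collocation system concrete. The sketch below fits the parabola \( f(x)=10(x-1)^2-1 \) with the basis \( \{1,x\} \); the two collocation points 4/3 and 5/3 are an assumption made here for illustration.

```python
from fractions import Fraction

def f(x):
    return 10*(x - 1)**2 - 1

psi = [lambda x: Fraction(1), lambda x: x]    # basis functions 1 and x
points = [Fraction(4, 3), Fraction(5, 3)]     # assumed collocation points

# A[i][j] = psi_j(x_i), b[i] = f(x_i): no integration involved
A = [[psi_j(x_i) for psi_j in psi] for x_i in points]
b = [f(x_i) for x_i in points]

# Solve the 2x2 system by Cramer's rule
det = A[0][0]*A[1][1] - A[0][1]*A[1][0]
c = [(b[0]*A[1][1] - A[0][1]*b[1])/det,
     (A[0][0]*b[1] - A[1][0]*b[0])/det]
print(A, c)
```

With these points the matrix is visibly nonsymmetric, and the resulting line is \( u(x) = 10x - 119/9 \).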

points holds the interpolation/collocation points

    def interpolation(f, psi, points):
        N = len(psi) - 1
        A = sym.zeros(N+1, N+1)
        b = sym.zeros(N+1, 1)
        x = sym.Symbol('x')
        # Turn psi and f into Python functions
        psi = [sym.lambdify([x], psi[i]) for i in range(N+1)]
        f = sym.lambdify([x], f)
        for i in range(N+1):
            for j in range(N+1):
                A[i,j] = psi[j](points[i])
            b[i,0] = f(points[i])
        c = A.LUsolve(b)
        u = 0
        for i in range(len(psi)):
            u += c[i,0]*psi[i](x)
        return u

Overdetermined linear system: $$ u(\xno{i}) = \sum_{j\in\If} c_j \baspsi_j(\xno{i}) = f(\xno{i}), \quad i=0,1,\ldots,m $$ $$ \sum_{j\in\If} A_{i,j}c_j = b_i,\quad i=0,1,\ldots,m $$ $$ A_{i,j} = \baspsi_j(\xno{i}),\quad b_i = f(\xno{i})$$
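One standard way to treat such an overdetermined system is through the normal equations \( A^TA c = A^Tb \), which is what the sums in the regression code amount to. The sketch below works a small case in exact arithmetic; the four points are an assumption chosen for illustration.

```python
from fractions import Fraction

def f(x):
    return 10*(x - 1)**2 - 1

psi = [lambda x: Fraction(1), lambda x: x]           # basis functions 1 and x
points = [Fraction(k, 5) for k in (6, 7, 8, 9)]      # assumed points in [1, 2]

A = [[psi_j(x_i) for psi_j in psi] for x_i in points]   # (m+1) x (N+1) matrix
b = [f(x_i) for x_i in points]
n = len(psi)

# Normal equations: B = A^T A, d = A^T b
B = [[sum(row[i]*row[j] for row in A) for j in range(n)] for i in range(n)]
d = [sum(row[i]*bk for row, bk in zip(A, b)) for i in range(n)]

# Solve the 2x2 system by Cramer's rule
det = B[0][0]*B[1][1] - B[0][1]*B[1][0]
c = [(d[0]*B[1][1] - B[0][1]*d[1])/det,
     (B[0][0]*d[1] - B[1][0]*d[0])/det]

# Residual of the overdetermined system
r = [sum(a*cj for a, cj in zip(row, c)) - bk for row, bk in zip(A, b)]
print(c, r)
```

The residual is not zero, since four equations cannot all be met by two unknowns, but it is orthogonal to every column of \( A \).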

    def regression(f, psi, points):
        N = len(psi) - 1
        m = len(points) - 1   # points are numbered 0,...,m
        # Use numpy arrays and numerical computing
        B = np.zeros((N+1, N+1))
        d = np.zeros(N+1)
        # Wrap psi and f in Python functions rather than expressions
        # so that we can evaluate psi at points[i]
        x = sym.Symbol('x')
        psi_sym = psi  # save symbolic expression
        psi = [sym.lambdify([x], psi[i]) for i in range(N+1)]
        f = sym.lambdify([x], f)
        for i in range(N+1):
            for j in range(N+1):
                B[i,j] = 0
                for k in range(m+1):
                    B[i,j] += psi[i](points[k])*psi[j](points[k])
            d[i] = 0
            for k in range(m+1):
                d[i] += psi[i](points[k])*f(points[k])
        c = np.linalg.solve(B, d)
        u = sum(c[i]*psi_sym[i] for i in range(N+1))
        return u, c

    import sympy as sym
    import numpy as np

    x = sym.Symbol('x')
    f = 10*(x-1)**2 - 1
    psi = [1, x]
    Omega = [1, 2]
    m_values = [2-1, 8-1, 64-1]
    # Create m+3 points and use the inner m+1 points
    for m in m_values:
        points = np.linspace(Omega[0], Omega[1], m+3)[1:-1]
        u, c = regression(f, psi, points)
        comparison_plot(f, u, Omega, points=points,
                        points_legend='%d interpolation points' % (m+1))

Motivation:

Hence, \( c_i = f(\xno{i}) \) and $$ u(x) = \sum_{j\in\If} f(\xno{j})\baspsi_j(x) $$

    def Lagrange_polynomial(x, i, points):
        p = 1
        for k in range(len(points)):
            if k != i:
                p *= (x - points[k])/(points[i] - points[k])
        return p

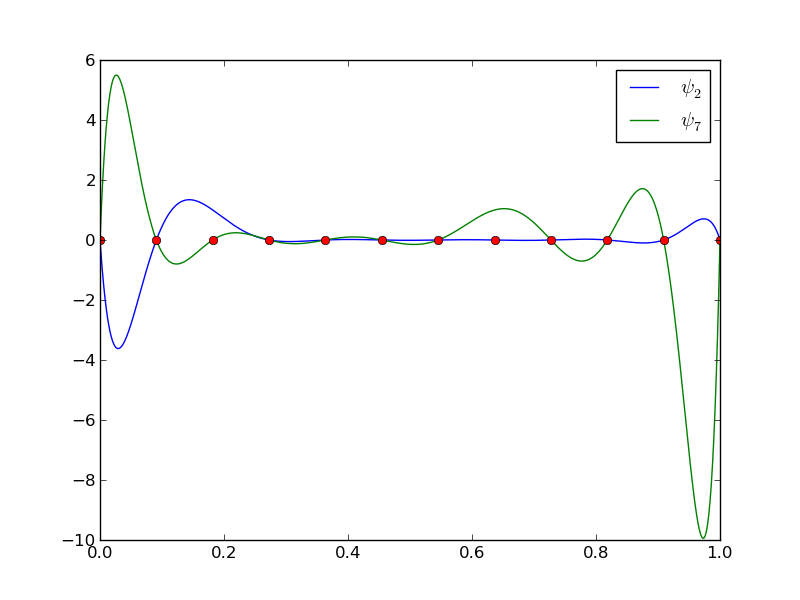

12 points, degree 11, plot of two of the Lagrange polynomials - note that they are zero at all points except one.
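The property that each Lagrange polynomial is one at its own point and zero at all the others can be verified directly. The sketch below repeats the Lagrange_polynomial function from above so that it is self-contained; the five uniform points on \( [0,1] \) are an assumption for illustration.

```python
from fractions import Fraction

def Lagrange_polynomial(x, i, points):
    p = 1
    for k in range(len(points)):
        if k != i:
            p *= (x - points[k])/(points[i] - points[k])
    return p

points = [Fraction(k, 4) for k in range(5)]   # assumed: 5 uniform points on [0, 1]

# delta[i][j] = psi_i(x_j): should be the identity matrix
delta = [[Lagrange_polynomial(xj, i, points) for xj in points]
         for i in range(len(points))]

# The polynomials sum to one at any x (they reproduce constants exactly)
x = Fraction(1, 3)
total = sum(Lagrange_polynomial(x, i, points) for i in range(len(points)))
print(delta, total)
```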

Problem: strong oscillations near the boundaries for larger \( N \) values.

The oscillations can be reduced by a more clever choice of interpolation points, called the Chebyshev nodes: $$ \xno{i} = \half (a+b) + \half(b-a)\cos\left( \frac{2i+1}{2(N+1)}\pi\right),\quad i=0,\ldots,N $$ on an interval \( [a,b] \).
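The node formula translates directly into code. This is a minimal sketch; the function name Chebyshev_nodes and the choice \( [a,b]=[0,1] \), \( N=11 \) are assumptions made for illustration.

```python
from math import cos, pi

def Chebyshev_nodes(a, b, N):
    """Return the N+1 Chebyshev nodes on [a, b]."""
    return [0.5*(a + b) + 0.5*(b - a)*cos((2*i + 1)*pi/(2*(N + 1)))
            for i in range(N + 1)]

nodes = Chebyshev_nodes(0, 1, 11)   # 12 points, as in the plots
print(nodes)
```

The nodes come out in decreasing order, lie strictly inside the interval, and cluster toward the end points, which is where uniform points give the strongest oscillations.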

12 points, degree 11, plot of two of the Lagrange polynomials - note that they are zero at all points except one.

Split \( \Omega \) into \( N_e \) non-overlapping subdomains called elements: $$ \Omega = \Omega^{(0)}\cup \cdots \cup \Omega^{(N_e-1)} $$

On each element, introduce \( N_n \) points called nodes: \( \xno{0},\ldots,\xno{N_n-1} \)

Data structure: nodes holds the coordinates of the nodes, elements holds the node numbers in each element

nodes = [0, 1.2, 2.4, 3.6, 4.8, 5]

elements = [[0, 1], [1, 2], [2, 3], [3, 4], [4, 5]]

nodes = [0, 0.125, 0.25, 0.375, 0.5, 0.625, 0.75, 0.875, 1.0]

elements = [[0, 1, 2], [2, 3, 4], [4, 5, 6], [6, 7, 8]]

d = 3 # d+1 nodes per element

num_elements = 4

num_nodes = num_elements*d + 1

nodes = [i*0.5 for i in range(num_nodes)]

elements = [[i*d+j for j in range(d+1)] for i in range(num_elements)]

nodes = [1.5, 5.5, 4.2, 0.3, 2.2, 3.1]

elements = [[2, 1], [4, 5], [0, 4], [3, 0], [5, 2]]

Important property: \( c_i \) is the value of \( u \) at node \( i \), \( \xno{i} \): $$ u(\xno{i}) = \sum_{j\in\If} c_j\basphi_j(\xno{i}) = c_i\basphi_i(\xno{i}) = c_i $$

because \( \basphi_j(\xno{i}) =0 \) if \( i\neq j \) and \( \basphi_i(\xno{i}) =1 \)
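This interpretation of the coefficients can be tested with piecewise linear (P1) hat functions. The sketch below assumes a mesh with nodes numbered from left to right; the function names hat and u and the coefficient values are hypothetical.

```python
def hat(i, x, nodes):
    """P1 basis function phi_i on a mesh with sorted node coordinates."""
    xi = nodes[i]
    if i > 0 and nodes[i-1] <= x <= xi:
        return (x - nodes[i-1])/(xi - nodes[i-1])    # rising part
    if i < len(nodes) - 1 and xi <= x <= nodes[i+1]:
        return (nodes[i+1] - x)/(nodes[i+1] - xi)    # falling part
    return 0.0

def u(x, c, nodes):
    """Evaluate the expansion sum_j c_j*phi_j(x)."""
    return sum(c[j]*hat(j, x, nodes) for j in range(len(nodes)))

nodes = [0.0, 0.25, 0.5, 0.75, 1.0]
c = [0.0, 1.5, -2.0, 0.5, 3.0]      # arbitrary coefficients

values = [u(x, c, nodes) for x in nodes]
print(values)           # equals c
print(u(0.125, c, nodes))   # linear between the two neighboring nodal values
```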

\( A_{2,3}=\int_\Omega\basphi_2\basphi_3 dx \): \( \basphi_2\basphi_3\neq 0 \) only over element 2. There, $$ \basphi_3(x) = (x-x_2)/h,\quad \basphi_2(x) = 1- (x-x_2)/h$$ $$ A_{2,3} = \int_\Omega \basphi_2\basphi_{3}\dx = \int_{\xno{2}}^{\xno{3}} \left(1 - \frac{x - \xno{2}}{h}\right) \frac{x - x_{2}}{h} \dx = \frac{h}{6} $$

Need a specific \( f(x) \) to do more...

Important observations:

Now,

i=elements[e][r])

Important observations:

Instead of computing $$ \begin{equation*} \tilde A^{(e)}_{r,s} = \int_{\Omega^{(e)}}\basphi_{q(e,r)}(x)\basphi_{q(e,s)}(x)dx = \int_{x_L}^{x_R}\basphi_{q(e,r)}(x)\basphi_{q(e,s)}(x)dx \end{equation*} $$ we now map \( [x_L, x_R] \) to a standardized reference element domain \( [-1,1] \) with local coordinate \( X \)

Reference element integration: just change integration variable from \( x \) to \( X \). Introduce local basis function $$ \refphi_r(X) = \basphi_{q(e,r)}(x(X)) $$ $$ \tilde A^{(e)}_{r,s} = \int_{\Omega^{(e)}}\basphi_{q(e,r)}(x)\basphi_{q(e,s)}(x)dx = \int\limits_{-1}^1 \refphi_r(X)\refphi_s(X)\underbrace{\frac{dx}{dX}}_{\det J = h/2}dX = \int\limits_{-1}^1 \refphi_r(X)\refphi_s(X)\det J\,dX $$ $$ \tilde b^{(e)}_{r} = \int_{\Omega^{(e)}}f(x)\basphi_{q(e,r)}(x)dx = \int\limits_{-1}^1 f(x(X))\refphi_r(X)\det J\,dX $$

Note: simple polynomial expressions (no need to consider piecewisely defined functions)

Easy to generalize to arbitrary order!

Three alternatives:

P1 elements and \( f(x)=x(1-x) \). $$ \begin{align} \tilde A^{(e)}_{0,0} &= \int_{-1}^1 \refphi_0(X)\refphi_0(X)\frac{h}{2} dX\nonumber\\ &=\int_{-1}^1 \half(1-X)\half(1-X) \frac{h}{2} dX = \frac{h}{8}\int_{-1}^1 (1-X)^2 dX = \frac{h}{3} \label{fem:approx:fe:intg:ref:Ae00}\\ \tilde A^{(e)}_{1,0} &= \int_{-1}^1 \refphi_1(X)\refphi_0(X)\frac{h}{2} dX\nonumber\\ &=\int_{-1}^1 \half(1+X)\half(1-X) \frac{h}{2} dX = \frac{h}{8}\int_{-1}^1 (1-X^2) dX = \frac{h}{6} \label{_auto10}\\ \tilde A^{(e)}_{0,1} &= \tilde A^{(e)}_{1,0} \label{fem:approx:fe:intg:ref:Ae10}\\ \tilde A^{(e)}_{1,1} &= \int_{-1}^1 \refphi_1(X)\refphi_1(X)\frac{h}{2} dX\nonumber\\ &=\int_{-1}^1 \half(1+X)\half(1+X) \frac{h}{2} dX = \frac{h}{8}\int_{-1}^1 (1+X)^2 dX = \frac{h}{3} \label{fem:approx:fe:intg:ref:Ae11} \end{align} $$
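The same element matrix can be cross-checked numerically on the reference element. The sketch below uses the 2-point Gauss-Legendre rule, which is exact for the quadratic integrands that arise here; the names phi_ref and element_matrix_P1 and the value \( h=0.1 \) are assumptions for illustration.

```python
from math import sqrt

def phi_ref(r, X):
    """P1 basis functions on the reference element [-1, 1]."""
    return 0.5*(1 - X) if r == 0 else 0.5*(1 + X)

def element_matrix_P1(h):
    """Element matrix for P1 elements via 2-point Gauss-Legendre."""
    points = [-1/sqrt(3), 1/sqrt(3)]
    weights = [1.0, 1.0]
    detJ = h/2   # dx/dX for the affine mapping
    return [[sum(w*phi_ref(r, X)*phi_ref(s, X)*detJ
                 for X, w in zip(points, weights))
             for s in range(2)] for r in range(2)]

h = 0.1
A_e = element_matrix_P1(h)
print(A_e)   # compare with h/3 on the diagonal and h/6 off the diagonal
```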

\( x_m \): element midpoint.

>>> import sympy as sym

>>> x, x_m, h, X = sym.symbols('x x_m h X')

>>> sym.integrate(h/8*(1-X)**2, (X, -1, 1))

h/3

>>> sym.integrate(h/8*(1+X)*(1-X), (X, -1, 1))

h/6

>>> x = x_m + h/2*X

>>> b_0 = sym.integrate(h/4*x*(1-x)*(1-X), (X, -1, 1))

>>> print(b_0)

-h**3/24 + h**2*x_m/6 - h**2/12 - h*x_m**2/2 + h*x_m/2

Can print out in LaTeX too (convenient for copying into reports):

>>> print(sym.latex(b_0, mode='plain'))

- \frac{1}{24} h^{3} + \frac{1}{6} h^{2} x_{m}

- \frac{1}{12} h^{2} - \frac{1}{2} h x_{m}^{2}

+ \frac{1}{2} h x_{m}

Let \( \refphi_r(X) \) be a Lagrange polynomial of degree d:

    import sympy as sym
    import numpy as np

    def phi_r(r, X, d):
        if isinstance(X, sym.Symbol):
            h = sym.Rational(1, d)  # node spacing
            nodes = [2*i*h - 1 for i in range(d+1)]
        else:
            # assume X is numeric: use floats for nodes
            nodes = np.linspace(-1, 1, d+1)
        return Lagrange_polynomial(X, r, nodes)

    def Lagrange_polynomial(x, i, points):
        p = 1
        for k in range(len(points)):
            if k != i:
                p *= (x - points[k])/(points[i] - points[k])
        return p

    def basis(d=1):
        """Return the complete basis."""
        X = sym.Symbol('X')
        phi = [phi_r(r, X, d) for r in range(d+1)]
        return phi

    def element_matrix(phi, Omega_e, symbolic=True):
        n = len(phi)
        A_e = sym.zeros(n, n)
        X = sym.Symbol('X')
        if symbolic:
            h = sym.Symbol('h')
        else:
            h = Omega_e[1] - Omega_e[0]
        detJ = h/2  # dx/dX
        for r in range(n):
            # Integrate only the upper triangle and mirror it
            for s in range(r, n):
                A_e[r,s] = sym.integrate(phi[r]*phi[s]*detJ, (X, -1, 1))
                A_e[s,r] = A_e[r,s]
        return A_e

>>> from fe_approx1D import *

>>> phi = basis(d=1)

>>> phi

[1/2 - X/2, 1/2 + X/2]

>>> element_matrix(phi, Omega_e=[0.1, 0.2], symbolic=True)

[h/3, h/6]

[h/6, h/3]

>>> element_matrix(phi, Omega_e=[0.1, 0.2], symbolic=False)

[0.0333333333333333, 0.0166666666666667]

[0.0166666666666667, 0.0333333333333333]

    def element_vector(f, phi, Omega_e, symbolic=True):
        n = len(phi)
        b_e = sym.zeros(n, 1)
        # Make f a function of X
        X = sym.Symbol('X')
        if symbolic:
            h = sym.Symbol('h')
        else:
            h = Omega_e[1] - Omega_e[0]
        x = (Omega_e[0] + Omega_e[1])/2 + h/2*X  # mapping
        f = f.subs('x', x)  # substitute mapping formula for x
        detJ = h/2  # dx/dX
        for r in range(n):
            b_e[r] = sym.integrate(f*phi[r]*detJ, (X, -1, 1))
        return b_e

Note f.subs('x', x): replace x by \( x(X) \) such that f contains X

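For the element matrix the integrands are polynomials, so sympy always succeeds. For the element vector, symbolic integration of \( f\refphi_r \) can fail (sympy then returns an Integral object instead of a number).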

    import mpmath  # sympy.mpmath is no longer bundled with SymPy

    def element_vector(f, phi, Omega_e, symbolic=True):
        ...
            I = sym.integrate(f*phi[r]*detJ, (X, -1, 1))  # try...
            if isinstance(I, sym.Integral):
                h = Omega_e[1] - Omega_e[0]  # Ensure h is numerical
                detJ = h/2
                integrand = sym.lambdify([X], f*phi[r]*detJ, modules='mpmath')
                I = mpmath.quad(integrand, [-1, 1])
            b_e[r] = I
        ...

    def assemble(nodes, elements, phi, f, symbolic=True):
        N_n, N_e = len(nodes), len(elements)
        if symbolic:
            A = sym.zeros(N_n, N_n)
            b = sym.zeros(N_n, 1)
        else:
            A = np.zeros((N_n, N_n))
            b = np.zeros((N_n, 1))
        for e in range(N_e):
            Omega_e = [nodes[elements[e][0]], nodes[elements[e][-1]]]
            A_e = element_matrix(phi, Omega_e, symbolic)
            b_e = element_vector(f, phi, Omega_e, symbolic)
            # Add element contributions at the global node numbers
            for r in range(len(elements[e])):
                for s in range(len(elements[e])):
                    A[elements[e][r],elements[e][s]] += A_e[r,s]
                b[elements[e][r]] += b_e[r]
        return A, b
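The local-to-global mapping in the assembly loop can be tried out in isolation. The sketch below is a simplified stand-in for assemble, not the module's function: it handles only the matrix for P1 elements, inserts the known element matrix \( \frac{h}{6}[[2,1],[1,2]] \) directly, and uses exact rational arithmetic. The name assemble_P1 is hypothetical.

```python
from fractions import Fraction

def assemble_P1(nodes, elements):
    """Assemble the global matrix from P1 element matrices h/6*[[2,1],[1,2]]."""
    n = len(nodes)
    A = [[Fraction(0)]*n for _ in range(n)]
    for element in elements:
        h = abs(nodes[element[1]] - nodes[element[0]])   # element length
        A_e = [[h/3, h/6], [h/6, h/3]]
        for r, i in enumerate(element):        # local r -> global i
            for s, j in enumerate(element):    # local s -> global j
                A[i][j] += A_e[r][s]
    return A

# Regular numbering: two elements of length h
h = Fraction(1, 2)
A = assemble_P1([0*h, 1*h, 2*h], elements=[[0, 1], [1, 2]])
print(A)

# Irregular numbering, using the nodes and elements lists shown earlier
nodes2 = [Fraction(s) for s in ('1.5', '5.5', '4.2', '0.3', '2.2', '3.1')]
elements2 = [[2, 1], [4, 5], [0, 4], [3, 0], [5, 2]]
A2 = assemble_P1(nodes2, elements2)
```

With irregular numbering the matrix is still symmetric, but the nonzero entries no longer line up along three diagonals.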

    if symbolic:
        c = A.LUsolve(b)           # sympy arrays, symbolic Gaussian elim.
    else:
        c = np.linalg.solve(A, b)  # numpy arrays, numerical solve

Note: the symbolic computation of A, b and A.LUsolve(b) can be very tedious.

>>> h, x = sym.symbols('h x')

>>> nodes = [0, h, 2*h]

>>> elements = [[0, 1], [1, 2]]

>>> phi = basis(d=1)

>>> f = x*(1-x)

>>> A, b = assemble(nodes, elements, phi, f, symbolic=True)

>>> A

[h/3, h/6, 0]

[h/6, 2*h/3, h/6]

[ 0, h/6, h/3]

>>> b

[ h**2/6 - h**3/12]

[ h**2 - 7*h**3/6]

[5*h**2/6 - 17*h**3/12]

>>> c = A.LUsolve(b)

>>> c

[ h**2/6]

[12*(7*h**2/12 - 35*h**3/72)/(7*h)]

[ 7*(4*h**2/7 - 23*h**3/21)/(2*h)]

>>> nodes = [0, 0.5, 1]

>>> elements = [[0, 1], [1, 2]]

>>> phi = basis(d=1)

>>> x = sym.Symbol('x')

>>> f = x*(1-x)

>>> A, b = assemble(nodes, elements, phi, f, symbolic=False)

>>> A

[ 0.166666666666667, 0.0833333333333333, 0]

[0.0833333333333333, 0.333333333333333, 0.0833333333333333]

[ 0, 0.0833333333333333, 0.166666666666667]

>>> b

[ 0.03125]

[0.104166666666667]

[ 0.03125]

>>> c = A.LUsolve(b)

>>> c

[0.0416666666666666]

[ 0.291666666666667]

[0.0416666666666666]

>>> d=1; N_e=8; Omega=[0,1] # 8 linear elements on [0,1]

>>> phi = basis(d)

>>> f = x*(1-x)

>>> nodes, elements = mesh_symbolic(N_e, d, Omega)

>>> A, b = assemble(nodes, elements, phi, f, symbolic=True)

>>> A

[h/3, h/6, 0, 0, 0, 0, 0, 0, 0]

[h/6, 2*h/3, h/6, 0, 0, 0, 0, 0, 0]

[ 0, h/6, 2*h/3, h/6, 0, 0, 0, 0, 0]

[ 0, 0, h/6, 2*h/3, h/6, 0, 0, 0, 0]

[ 0, 0, 0, h/6, 2*h/3, h/6, 0, 0, 0]

[ 0, 0, 0, 0, h/6, 2*h/3, h/6, 0, 0]

[ 0, 0, 0, 0, 0, h/6, 2*h/3, h/6, 0]

[ 0, 0, 0, 0, 0, 0, h/6, 2*h/3, h/6]

[ 0, 0, 0, 0, 0, 0, 0, h/6, h/3]

Note: do this by hand to understand what is going on!

The minimum storage requirements for the coefficient matrix \( A_{i,j} \):

In Python, sparse matrix storage and solution are available through the scipy.sparse package.

Compute a mesh with \( N_e \) elements, basis functions of degree \( d \), and approximate a given symbolic expression \( f(x) \) by a finite element expansion \( u(x) = \sum_jc_j\basphi_j(x) \):

import sympy as sym

from fe_approx1D import approximate

x = sym.Symbol('x')

approximate(f=x*(1-x)**8, symbolic=False, d=1, N_e=4)

approximate(f=x*(1-x)**8, symbolic=False, d=2, N_e=2)

approximate(f=x*(1-x)**8, symbolic=False, d=1, N_e=8)

approximate(f=x*(1-x)**8, symbolic=False, d=2, N_e=4)

Let \( \{\xno{i}\}_{i\in\If} \) be the nodes in the mesh. Collocation/interpolation means $$ u(\xno{i})=f(\xno{i}),\quad i\in\If, $$ which translates to $$ \sum_{j\in\If} c_j \basphi_j(\xno{i}) = f(\xno{i}),$$ but \( \basphi_j(\xno{i})=0 \) if \( i\neq j \) so the sum collapses to one term \( c_i\basphi_i(\xno{i}) = c_i \), and we have the result $$ c_i = f(\xno{i}) $$

Same result as the standard finite difference approach, but finite elements define \( u \) also between the \( \xno{i} \) points

Note:

Rewrite the left-hand side of finite element equation no \( i \): $$ h(u_i + \frac{1}{6}(u_{i-1} - 2u_i + u_{i+1})) = [h(u + \frac{h^2}{6}D_x D_x u)]_i $$ This is the standard finite difference approximation of $$ h(u + \frac{h^2}{6}u'')$$
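The rewrite can be confirmed with a few lines of exact arithmetic. In this sketch the row entries \( h/6,\ 2h/3,\ h/6 \) are taken from the assembled P1 matrix shown earlier, while the mesh size and nodal values are arbitrary assumptions.

```python
from fractions import Fraction

h = Fraction(1, 4)
u = [Fraction(v) for v in (2, -1, 5, 3, 0)]   # arbitrary nodal values

def fe_row(i):
    """Row i of the P1 system: (h/6)u_{i-1} + (2h/3)u_i + (h/6)u_{i+1}."""
    return h/6*u[i-1] + 2*h/3*u[i] + h/6*u[i+1]

def fd_form(i):
    """h*(u + (h^2/6) D_x D_x u) evaluated at node i."""
    DxDx = (u[i-1] - 2*u[i] + u[i+1])/h**2
    return h*(u[i] + h**2/6*DxDx)

interior = range(1, len(u) - 1)
print([(fe_row(i), fd_form(i)) for i in interior])
```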

Trapezoidal rule using the nodes: $$ (f,\basphi_i) = \int_\Omega f\basphi_i dx\approx h\half( f(\xno{0})\basphi_i(\xno{0}) + f(\xno{N})\basphi_i(\xno{N})) + h\sum_{j=1}^{N-1} f(\xno{j})\basphi_i(\xno{j}) $$ \( \basphi_i(\xno{j})=\delta_{ij} \), so this formula collapses to one term: $$ (f,\basphi_i) \approx hf(\xno{i}),\quad i=1,\ldots,N-1\thinspace. $$

Same result as in collocation (interpolation) and the finite difference method!
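To see how close \( hf(\xno{i}) \) is to the true value of \( (f,\basphi_i) \), the two can be compared numerically. A minimal sketch, assuming a uniform P1 mesh on \( [0,1] \) and using `scipy.integrate.quad` as the reference integrator; the helper `hat` is hypothetical.

```python
import numpy as np
from scipy.integrate import quad


def f(x):
    return x * (1 - x)


N = 8
h = 1.0 / N
nodes = np.linspace(0, 1, N + 1)


def hat(x, i):
    """P1 basis function phi_i on the uniform mesh."""
    return max(0.0, 1 - abs(x - nodes[i]) / h)


b_ref = []    # accurately integrated (f, phi_i)
b_trap = []   # Trapezoidal approximation h*f(x_i)
for i in range(1, N):
    # Split the integration at the node, where phi_i has a kink
    value, _ = quad(lambda x: f(x) * hat(x, i),
                    nodes[i - 1], nodes[i + 1], points=[nodes[i]])
    b_ref.append(value)
    b_trap.append(h * f(nodes[i]))

b_ref = np.array(b_ref)
b_trap = np.array(b_trap)
print(b_trap - b_ref)
```

For this quadratic \( f \), the difference is the same at every interior node and shrinks rapidly as the mesh is refined.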

Conclusions:

With Trapezoidal integration of \( (f,\basphi_i) \), the finite element method essentially solves $$ u + \frac{h^2}{6} u'' = f,\quad u'(0)=u'(L)=0, $$ by the finite difference method $$ [u + \frac{h^2}{6} D_x D_x u = f]_i $$

With Simpson integration of \( (f,\basphi_i) \) we essentially solve $$ [u + \frac{h^2}{6} D_x D_x u = \bar f]_i, $$ where $$ \bar f_i = \frac{1}{3}(f_{i-1/2} + f_i + f_{i+1/2}) $$

Note: as \( h\rightarrow 0 \), \( h^2u''\rightarrow 0 \) and \( \bar f_i\rightarrow f_i \).

So far, we have worked with the nodes and elements arrays. We now throw these arrays away and find a more generalized element concept based on three data structures: vertices, cells, dof_map

vertices holds the cell vertex coordinates (equals nodes for P1 elements)

cells[e][r] holds the global vertex number of local vertex no r in element e (same as elements for P1 elements)

dof_map[e][r] maps local dof r in element e to global dof number (same as elements for Pd elements)

The assembly process uses dof_map:

A[dof_map[e][r], dof_map[e][s]] += A_e[r,s]

b[dof_map[e][r]] += b_e[r]

vertices = [0, 0.4, 1]

cells = [[0, 1], [1, 2]]

dof_map = [[0, 1, 2], [2, 3, 4]]

Example: Same mesh, but \( u \) is piecewise constant in each cell (P0 element).

Same vertices and cells, but

dof_map = [[0], [1]]

We may think of one node in the middle of each element.

We will hereafter work with cells, vertices, and dof_map.
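How the three data structures cooperate during assembly can be sketched for the two-cell mesh used above. The example assumes P1 elements (so `dof_map` equals `cells`) and uses the well-known P1 element matrix \( \frac{h}{6}\left[\begin{smallmatrix}2&1\\1&2\end{smallmatrix}\right] \); it illustrates the idea and is not the code in `fe_approx1D_numint`.

```python
import numpy as np

vertices = [0, 0.4, 1]
cells = [[0, 1], [1, 2]]
dof_map = [[0, 1], [1, 2]]       # P1 elements: same as cells

N_dofs = 1 + max(max(dofs) for dofs in dof_map)
A = np.zeros((N_dofs, N_dofs))

for e, cell in enumerate(cells):
    # Cell length from the vertex coordinates
    h = vertices[cell[1]] - vertices[cell[0]]
    # Element matrix for P1 basis functions on a cell of length h
    A_e = h / 6 * np.array([[2.0, 1.0], [1.0, 2.0]])
    # Add local contributions to the global matrix via dof_map
    for r in range(len(dof_map[e])):
        for s in range(len(dof_map[e])):
            A[dof_map[e][r], dof_map[e][s]] += A_e[r, s]

print(A)
```

The geometry enters only through `vertices` and `cells`, while the placement of entries in `A` is governed by `dof_map` alone, which is what allows other element types to reuse the same loop.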

# Use modified fe_approx1D module

from fe_approx1D_numint import *

x = sym.Symbol('x')

f = x*(1 - x)

N_e = 10

# Create mesh with P3 (cubic) elements

vertices, cells, dof_map = mesh_uniform(N_e, d=3, Omega=[0,1])

# Create basis functions on the mesh

phi = [basis(len(dof_map[e])-1) for e in range(N_e)]

# Create linear system and solve it

A, b = assemble(vertices, cells, dof_map, phi, f)

c = np.linalg.solve(A, b)

# Make very fine mesh and sample u(x) on this mesh for plotting

x_u, u = u_glob(c, vertices, cells, dof_map,
                resolution_per_element=51)

plot(x_u, u)

The approximate function automates the steps in the previous slide:

from fe_approx1D_numint import *

x=sym.Symbol("x")

for N_e in 4, 8:
    approximate(x*(1-x), d=0, N_e=N_e, Omega=[0,1])

Accurate approximation of the integral:

Sample \( u(x) \) at many points in each element (call u_glob, which returns x and u)

We need a version of the Trapezoidal rule valid for non-uniformly spaced points: $$ \int_\Omega g(x) dx \approx \sum_{j=0}^{n-1} \half(g(x_j) + g(x_{j+1}))(x_{j+1}-x_j)$$

# Given c, compute x and u values on a very fine mesh

x, u = u_glob(c, vertices, cells, dof_map,
              resolution_per_element=101)

# Compute the error on the very fine mesh

e = f(x) - u

e2 = e**2

# Vectorized Trapezoidal rule

E = np.sqrt(0.5*np.sum((e2[:-1] + e2[1:])*(x[1:] - x[:-1])))
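The same computation can be packaged as a small self-contained function and checked on a case with a known answer. This is a sketch; `l2_norm_trapezoidal` is a hypothetical name, and the test function \( e(x)=x \) on \( [0,1] \) is chosen only because its norm \( 1/\sqrt{3} \) is known.

```python
import numpy as np


def l2_norm_trapezoidal(x, e):
    """L2 norm of samples e at (possibly non-uniformly spaced) points x."""
    x = np.asarray(x, dtype=float)
    e2 = np.asarray(e, dtype=float) ** 2
    # Vectorized Trapezoidal rule for non-uniform spacing
    return np.sqrt(0.5 * np.sum((e2[:-1] + e2[1:]) * (x[1:] - x[:-1])))


# Non-uniform sampling: fine spacing on [0, 0.4], coarser on [0.4, 1]
x = np.concatenate([np.linspace(0, 0.4, 201),
                    np.linspace(0.4, 1, 101)[1:]])
E = l2_norm_trapezoidal(x, x)        # e(x) = x
print(E, 1 / np.sqrt(3))
```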

Theory and experiments show that the least squares or projection/Galerkin method in combination with Pd elements of equal length \( h \) has an error $$ ||e||_{L^2} = Ch^{d+1} $$ where \( C \) depends on \( f \), but not on \( h \) or \( d \).
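The exponent \( d+1 \) can be estimated from two experiments with element lengths \( h_1 \) and \( h_2 \) through \( r = \ln(E_1/E_2)/\ln(h_1/h_2) \). Below is a minimal self-contained sketch for P1 elements (\( d=1 \)), assuming Gauss-Legendre quadrature for the right-hand side and the error norm; `l2_error_p1` is a hypothetical helper and not part of `fe_approx1D_numint`.

```python
import numpy as np


def l2_error_p1(f, N_e, n_quad=4):
    """Least squares fit of f with N_e equal P1 elements on [0, 1].

    Returns the element length h and the L2 norm of the error.
    """
    h = 1.0 / N_e
    nodes = np.linspace(0, 1, N_e + 1)
    X, w = np.polynomial.legendre.leggauss(n_quad)   # rule on [-1, 1]
    phi = np.array([(1 - X) / 2, (1 + X) / 2])       # local P1 basis at X
    A = np.zeros((N_e + 1, N_e + 1))
    b = np.zeros(N_e + 1)
    A_e = h / 6 * np.array([[2.0, 1.0], [1.0, 2.0]])
    for e in range(N_e):
        x = nodes[e] + h / 2 * (X + 1)               # map X to the cell
        A[e:e + 2, e:e + 2] += A_e
        b[e:e + 2] += (phi * f(x) * w).sum(axis=1) * h / 2
    c = np.linalg.solve(A, b)
    E2 = 0.0
    for e in range(N_e):
        x = nodes[e] + h / 2 * (X + 1)
        u = c[e] * phi[0] + c[e + 1] * phi[1]
        E2 += np.sum(w * (f(x) - u) ** 2) * h / 2
    return h, np.sqrt(E2)


def f(x):
    return np.sin(np.pi * x)


h1, E1 = l2_error_p1(f, 8)
h2, E2 = l2_error_p1(f, 16)
rate = np.log(E1 / E2) / np.log(h1 / h2)
print(rate)   # should be close to d + 1 = 2 for P1 elements
```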

Derivatives as unknowns ensure the same \( \basphi_i'(x) \) value at nodes and thereby continuous derivatives.

4 constraints on \( \refphi_r \) (1 for dof \( r \), 0 for all others):

Simplest possibility: the Midpoint rule, $$ \int_{-1}^{1} g(X)dX \approx 2g(0),\quad \bar X_0=0,\ w_0=2, $$

Exact for linear integrands

Simpson's rule: $$ \int_{-1}^{1} g(X)dX \approx \frac{1}{3}\left(g(-1) + 4g(0) + g(1)\right), $$ where $$ \bar X_0=-1,\ \bar X_1=0,\ \bar X_2=1,\ w_0=w_2=\frac{1}{3},\ w_1=\frac{4}{3} $$
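Both rules on the reference interval \( [-1,1] \) are short enough to test directly. A small sketch; the function names and the test integrands are chosen for illustration only.

```python
def midpoint(g):
    """Midpoint rule on [-1, 1]: one point X=0 with weight 2."""
    return 2 * g(0.0)


def simpson(g):
    """Simpson's rule on [-1, 1]: points -1, 0, 1 with weights 1/3, 4/3, 1/3."""
    return (g(-1.0) + 4 * g(0.0) + g(1.0)) / 3


def linear(X):
    return 3 * X + 1          # exact integral over [-1, 1]: 2


def cubic(X):
    return X**3 + X**2        # exact integral over [-1, 1]: 2/3


print(midpoint(linear), simpson(cubic), midpoint(cubic))
```

The Midpoint rule reproduces the integral of the linear integrand but not of the cubic one, while Simpson's rule handles the cubic integrand as well.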

All the concepts and algorithms developed for approximation of 1D functions \( f(x) \) can readily be extended to 2D functions \( f(x,y) \) and 3D functions \( f(x,y,z) \). Key formulas stay the same.

Inner product in 2D: $$ (f,g) = \int_\Omega f(x,y)g(x,y) dx dy $$

Least squares and projection/Galerkin lead to a linear system $$ \begin{align*} \sum_{j\in\If} A_{i,j}c_j &= b_i,\quad i\in\If\\ A_{i,j} &= (\baspsi_i,\baspsi_j)\\ b_i &= (f,\baspsi_i) \end{align*} $$

Challenge: How to construct 2D basis functions \( \baspsi_i(x,y) \)?

Use a 1D basis for \( x \) variation and a similar one for \( y \) variation: $$ \begin{align} V_x &= \mbox{span}\{ \hat\baspsi_0(x),\ldots,\hat\baspsi_{N_x}(x)\} \label{fem:approx:2D:Vx}\\ V_y &= \mbox{span}\{ \hat\baspsi_0(y),\ldots,\hat\baspsi_{N_y}(y)\} \label{fem:approx:2D:Vy} \end{align} $$

The 2D vector space can be defined as a tensor product \( V = V_x\otimes V_y \) with basis functions $$ \baspsi_{p,q}(x,y) = \hat\baspsi_p(x)\hat\baspsi_q(y) \quad p\in\Ix,q\in\Iy\tp $$

Given two vectors \( a=(a_0,\ldots,a_M) \) and \( b=(b_0,\ldots,b_N) \) their outer tensor product, also called the dyadic product, is \( p=a\otimes b \), defined through $$ p_{i,j}=a_ib_j,\quad i=0,\ldots,M,\ j=0,\ldots,N\tp$$ Note: \( p \) has two indices (as a matrix or two-dimensional array)
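In NumPy the outer product is available as `np.outer`. A short sketch with arbitrarily chosen vectors:

```python
import numpy as np

a = np.array([1.0, 2.0, 3.0])       # M = 2
b = np.array([10.0, 20.0])          # N = 1
p = np.outer(a, b)                  # p[i, j] = a[i]*b[j]
print(p.shape)
print(p)
```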

Example: 2D basis as tensor product of 1D spaces, $$ \baspsi_{p,q}(x,y) = \hat\baspsi_p(x)\hat\baspsi_q(y), \quad p\in\Ix,q\in\Iy$$

The 2D basis can employ a double index and double sum: $$ u = \sum_{p\in\Ix}\sum_{q\in\Iy} c_{p,q}\baspsi_{p,q}(x,y) $$

Or just a single index: $$ u = \sum_{j\in\If} c_j\baspsi_j(x,y)$$

with an index mapping \( (p,q)\rightarrow i \): $$ \baspsi_i(x,y) = \hat\baspsi_p(x)\hat\baspsi_q(y), \quad i=p (N_y+1) + q\hbox{ or } i=q (N_x+1) + p $$
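The first of the two mappings, \( i=p(N_y+1)+q \), and its inverse can be sketched as follows; the function names are hypothetical.

```python
def single_index(p, q, Ny):
    """Map the double index (p, q) to a single index i = p*(Ny+1) + q."""
    return p * (Ny + 1) + q


def double_index(i, Ny):
    """Inverse mapping: recover (p, q) from the single index i."""
    return divmod(i, Ny + 1)


Nx, Ny = 2, 3
indices = [single_index(p, q, Ny)
           for p in range(Nx + 1) for q in range(Ny + 1)]
print(indices)
print(double_index(9, Ny))
```

With this choice \( q \) runs fastest, so looping over \( p \) in the outer loop and \( q \) in the inner loop visits the single indices consecutively.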

In 1D we use the basis $$ \{ 1, x \} $$

2D tensor product (all combinations): $$ \baspsi_{0,0}=1,\quad \baspsi_{1,0}=x, \quad \baspsi_{0,1}=y, \quad \baspsi_{1,1}=xy $$ or with a single index: $$ \baspsi_0=1,\quad \baspsi_1=x, \quad \baspsi_2=y,\quad\baspsi_3 =xy $$

See notes for details of a hand-calculation.

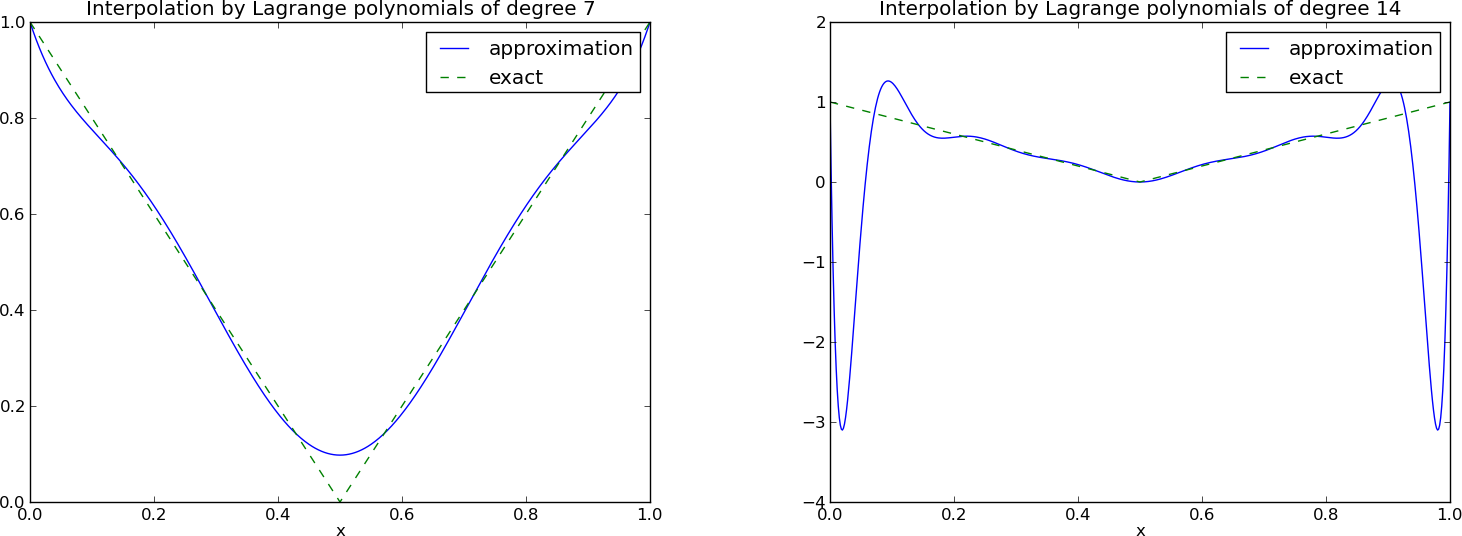

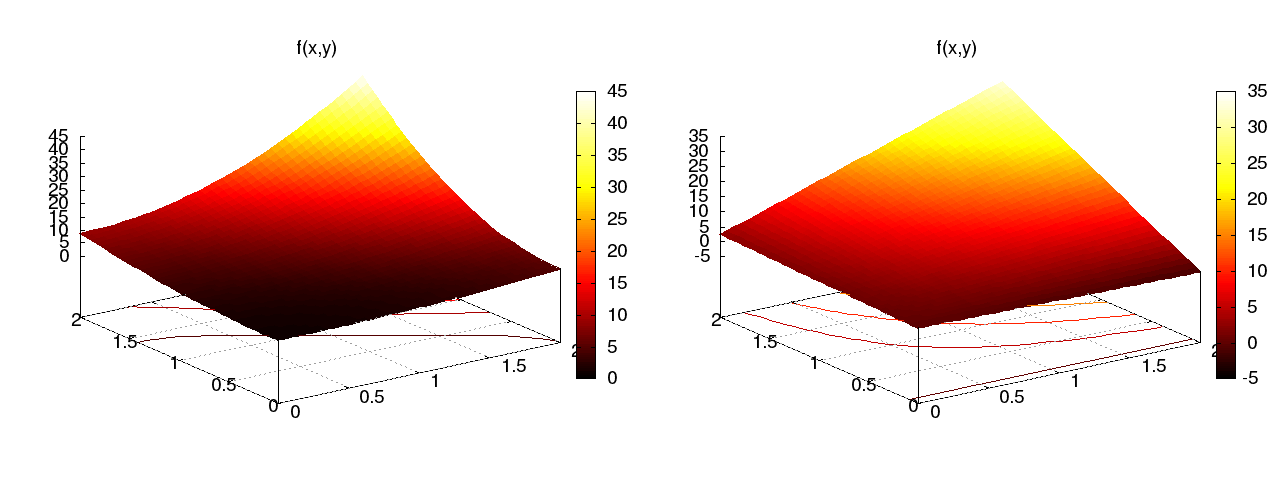

Quadratic \( f(x,y) = (1+x^2)(1+2y^2) \) (left), bilinear \( u \) (right):

Very small modification of approx1D.py:

Omega = [[0, L_x], [0, L_y]]

import sympy as sym
import mpmath   # sym.mpmath was removed in SymPy 1.0

integrand = psi[i]*psi[j]
I = sym.integrate(integrand,
                  (x, Omega[0][0], Omega[0][1]),
                  (y, Omega[1][0], Omega[1][1]))
# Fall back on numerical integration if symbolic integration
# was unsuccessful
if isinstance(I, sym.Integral):
    integrand = sym.lambdify([x, y], integrand, modules='mpmath')
    I = mpmath.quad(integrand,
                    [Omega[0][0], Omega[0][1]],
                    [Omega[1][0], Omega[1][1]])

Tensor product of 1D "Taylor-style" polynomials \( x^i \):

def taylor(x, y, Nx, Ny):
    return [x**i*y**j for i in range(Nx+1) for j in range(Ny+1)]

Tensor product of 1D sine functions \( \sin((i+1)\pi x) \):

def sines(x, y, Nx, Ny):
    return [sym.sin(sym.pi*(i+1)*x)*sym.sin(sym.pi*(j+1)*y)
            for i in range(Nx+1) for j in range(Ny+1)]

Complete code in approx2D.py

\( f(x,y) = (1+x^2)(1+2y^2) \)

>>> from approx2D import *

>>> f = (1+x**2)*(1+2*y**2)

>>> psi = taylor(x, y, 1, 1)

>>> Omega = [[0, 2], [0, 2]]

>>> u, c = least_squares(f, psi, Omega)

>>> print(u)

8*x*y - 2*x/3 + 4*y/3 - 1/9

>>> print(sym.expand(f))

2*x**2*y**2 + x**2 + 2*y**2 + 1

Add higher powers to the basis such that \( f\in V \):

>>> psi = taylor(x, y, 2, 2)

>>> u, c = least_squares(f, psi, Omega)

>>> print(u)

2*x**2*y**2 + x**2 + 2*y**2 + 1

>>> print(u-f)

0

Expected: \( u=f \) when \( f\in V \)
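For readers without access to approx2D.py, the session can be imitated with a few lines of SymPy. This is a self-contained sketch that assumes symbolic integration succeeds (no numerical fallback); `least_squares_2D` is a hypothetical stand-in and not the `least_squares` function from approx2D.py.

```python
import sympy as sym

x, y = sym.symbols("x y")


def least_squares_2D(f, psi, Omega):
    """Least squares fit of f(x, y) in span(psi) over the rectangle Omega."""
    N = len(psi)
    A = sym.zeros(N, N)
    b = sym.zeros(N, 1)
    x_range = (x, Omega[0][0], Omega[0][1])
    y_range = (y, Omega[1][0], Omega[1][1])
    for i in range(N):
        for j in range(i, N):
            # A is symmetric, so only the upper triangle is integrated
            A[i, j] = A[j, i] = sym.integrate(psi[i] * psi[j],
                                              x_range, y_range)
        b[i] = sym.integrate(psi[i] * f, x_range, y_range)
    c = A.LUsolve(b)
    u = sym.expand(sum(c[i] * psi[i] for i in range(N)))
    return u, c


f = (1 + x**2) * (1 + 2 * y**2)
psi = [1, x, y, x * y]                       # bilinear basis
u, c = least_squares_2D(f, psi, Omega=[[0, 2], [0, 2]])
print(u)

psi2 = [x**i * y**j for i in range(3) for j in range(3)]
u2, c2 = least_squares_2D(f, psi2, Omega=[[0, 2], [0, 2]])
print(sym.expand(u2 - f))
```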

Key idea: $$ V = V_x\otimes V_y\otimes V_z$$

$$ \begin{align*} a^{(q)} &= (a^{(q)}_0,\ldots,a^{(q)}_{N_q}),\quad q=0,\ldots,m\\ p &= a^{(0)}\otimes\cdots\otimes a^{(m)}\\ p_{i_0,i_1,\ldots,i_m} &= a^{(0)}_{i_0}a^{(1)}_{i_1}\cdots a^{(m)}_{i_m} \end{align*} $$
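The general tensor product can be formed by applying the two-vector outer product repeatedly. A small sketch with three arbitrarily chosen vectors, using `np.multiply.outer` together with `functools.reduce`:

```python
from functools import reduce

import numpy as np

a0 = np.array([1.0, 2.0])
a1 = np.array([1.0, 10.0, 100.0])
a2 = np.array([3.0, 5.0])

# p[i0, i1, i2] = a0[i0]*a1[i1]*a2[i2]
p = reduce(np.multiply.outer, [a0, a1, a2])
print(p.shape)
print(p[1, 2, 0])
```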

The two great advantages of the finite element method:

2D:

The P1 triangular 2D element: \( u \) is linear \( ax + by + c \) over each triangular cell

Higher-degree \( \refphi_r \) introduce more nodes (dof = node values)

Mapping of local \( \X = (X,Y) \) coordinates in the reference cell to global, physical \( \x = (x,y) \) coordinates: $$ \begin{equation} \x = \sum_{r} \refphi_r^{(1)}(\X)\xdno{q(e,r)} \label{fem:approx:fe:affine:map} \end{equation} $$

where

Idea: Use the basis functions of the element (not only the P1 functions) to map the element $$ \x = \sum_{r} \refphi_r(\X)\xdno{q(e,r)} $$

Advantage: higher-order polynomial basis functions now map the reference cell to a curved triangle or tetrahedron.

Integrals must be transformed from \( \Omega^{(e)} \) (physical cell) to \( \tilde\Omega^r \) (reference cell): $$ \begin{align} \int_{\Omega^{(e)}}\basphi_i (\x) \basphi_j (\x) \dx &= \int_{\tilde\Omega^r} \refphi_i (\X) \refphi_j (\X) \det J\, \dX \label{_auto22}\\ \int_{\Omega^{(e)}}\basphi_i (\x) f(\x) \dx &= \int_{\tilde\Omega^r} \refphi_i (\X) f(\x(\X)) \det J\, \dX \label{_auto23} \end{align} $$ where \( \dx = dx dy \) or \( \dx = dxdydz \) and \( \det J \) is the determinant of the Jacobian of the mapping \( \x(\X) \). $$ J = \left[\begin{array}{cc} \frac{\partial x}{\partial X} & \frac{\partial x}{\partial Y}\\ \frac{\partial y}{\partial X} & \frac{\partial y}{\partial Y} \end{array}\right], \quad \det J = \frac{\partial x}{\partial X}\frac{\partial y}{\partial Y} - \frac{\partial x}{\partial Y}\frac{\partial y}{\partial X} $$

Affine mapping \eqref{fem:approx:fe:affine:map}: \( \det J=2\Delta \), \( \Delta = \hbox{cell volume} \)
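The relation \( \det J = 2\Delta \) can be checked for a concrete triangle. The sketch below assumes the reference cell has vertices \( (0,0) \), \( (1,0) \), \( (0,1) \) with P1 functions \( 1-X-Y \), \( X \), \( Y \); the physical triangle and the helper names are chosen for illustration.

```python
import numpy as np


def affine_map(X, Y, verts):
    """Map reference coordinates (X, Y) to physical (x, y) using P1 functions."""
    phi = np.array([1 - X - Y, X, Y])
    return phi @ verts


def jacobian(verts):
    """Jacobian of the affine map; constant over the cell."""
    (x0, y0), (x1, y1), (x2, y2) = verts
    return np.array([[x1 - x0, x2 - x0],
                     [y1 - y0, y2 - y0]])


# Right triangle with base 2 and height 1, so its area is 1
verts = np.array([[0.0, 0.0], [2.0, 0.0], [0.0, 1.0]])
area = 1.0
detJ = np.linalg.det(jacobian(verts))
print(detJ, 2 * area)
print(affine_map(1, 0, verts))       # reference vertex (1, 0) maps to verts[1]
```

The sign of \( \det J \) depends on the vertex ordering; counterclockwise numbering, as used here, gives a positive value.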

Finite elements in 2D and 3D build on the same ideas and concepts as in 1D, but there is simply much more to compute because the specific mathematical formulas in 2D and 3D are more complicated and the bookkeeping with dof maps also gets more complicated. The manual work is tedious, lengthy, and error-prone, so automation by the computer is a must.